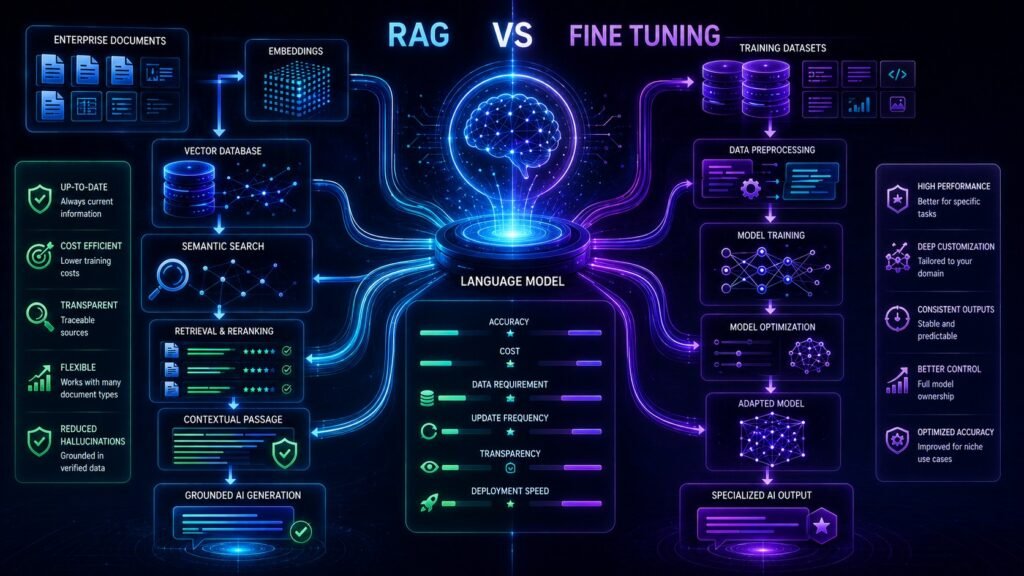

RAG vs Fine Tuning: Complete AI Comparison Guide

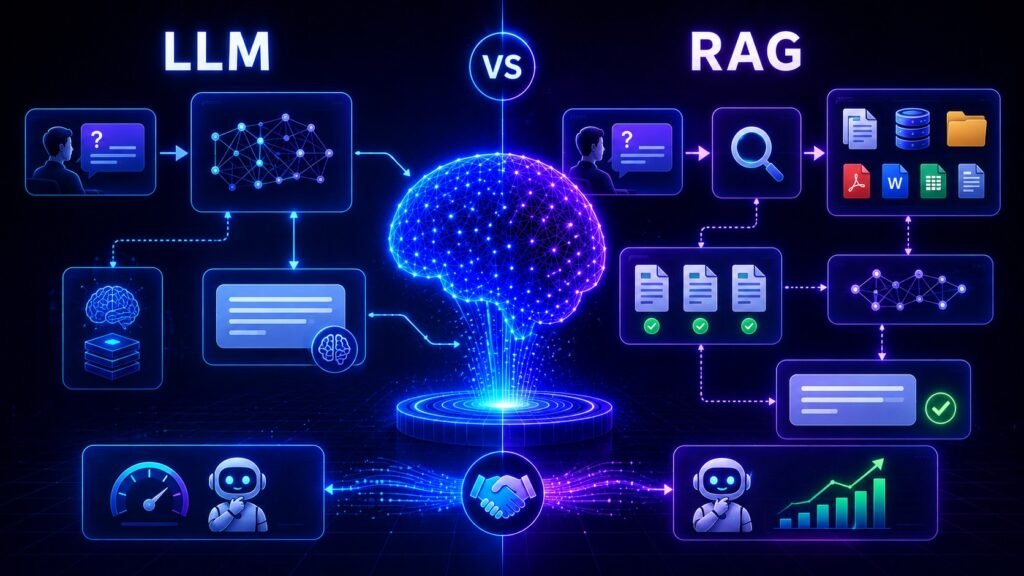

RAG vs Fine Tuning: Which AI Customization Method Is Better?

Modern enterprise AI systems increasingly depend on Large Language Models to power AI assistants, customer support copilots, enterprise search systems, document intelligence platforms, legal AI systems, healthcare AI applications, coding assistants, and workflow automation systems. However, organizations quickly face a major challenge after adopting Large Language […]
