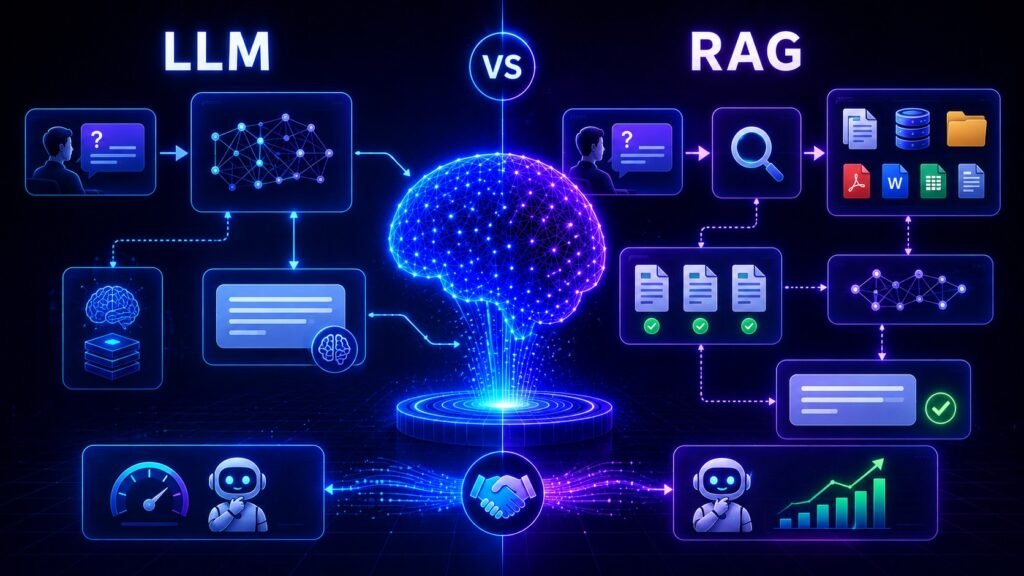

LLM vs RAG: Key Differences Explained Simply

LLM vs RAG: What’s the Difference and Which One Should You Use in 2026?

As AI adoption grows, many people hear two common terms: LLM and RAG. They are related, but not the same. Some teams assume RAG replaces LLMs. Others think they are competing technologies. In reality, they usually work together. This guide explains […]
