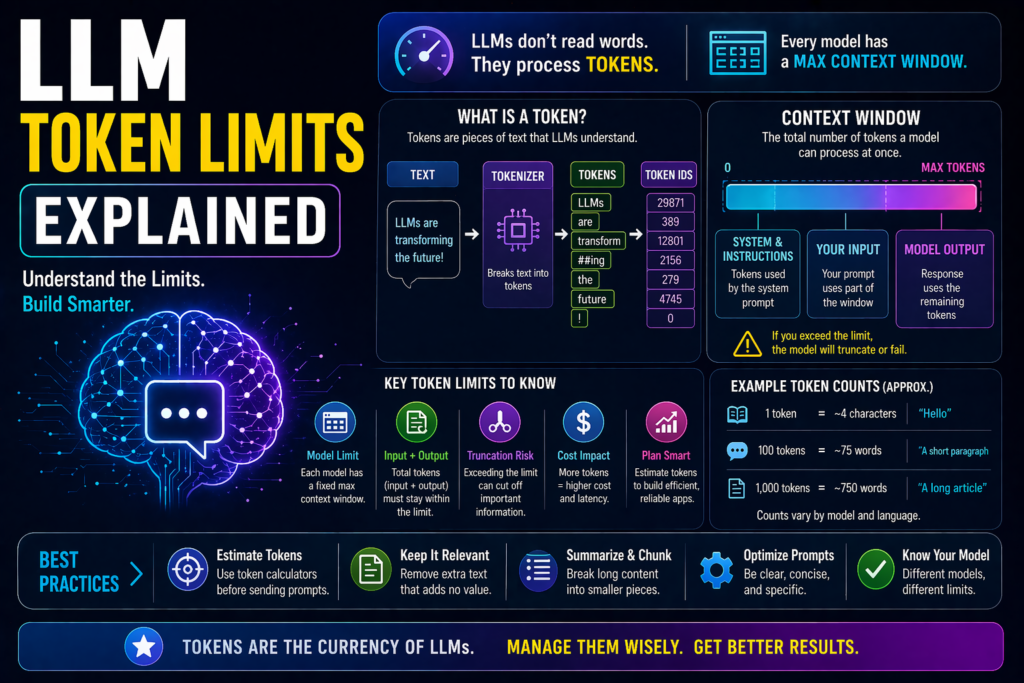

LLM Token Limits Explained: What They Mean and Why They Matter

When using AI tools, you may hear terms like tokens, token limits, context size, or maximum input length. These are important because they affect how much text an AI model can read, remember, and generate.

If an AI tool ever says your prompt is too long, cuts off an answer, or forgets earlier parts of a conversation, token limits are often the reason.

This guide explains LLM token limits in simple language.

In simple terms

A token limit is:

The maximum number of text units an LLM can process in one request.

These text units are called tokens.

Tokens may include:

- full words

- parts of words

- punctuation

- numbers

- symbols

- formatting characters

Think of tokens as the model’s counting system for language.

Why LLM Token Limits Matter

Token limits affect:

- prompt length

- conversation memory

- file analysis size

- output length

- speed and cost

- response quality

The more tokens a request uses, the more compute, time, and money it requires.

What is a Token?

AI models usually do not count words directly.

They break text into smaller chunks called tokens.

Examples:

- “Hello” may be one token

- “unbelievable” may become multiple tokens

- punctuation like commas may count too

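So 1,000 words does not equal 1,000 tokens. As a rough rule of thumb for English text, one token is about four characters, or roughly three quarters of a word, so 1,000 words often comes to around 1,300 tokens. The exact count depends on the model's tokenizer and on the language.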
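To make the word-versus-token gap concrete, here is a minimal sketch that estimates token counts from character length. The four-characters-per-token ratio is an assumed heuristic for English text, and `estimate_tokens` and `count_words` are hypothetical helper names. A real tokenizer will give different numbers.

```python
import math
import re


def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Rough token estimate from character length (assumed heuristic, not a real tokenizer)."""
    if not text:
        return 0
    return math.ceil(len(text) / chars_per_token)


def count_words(text: str) -> int:
    """Count whitespace-separated words."""
    return len(re.findall(r"\S+", text))


sample = "Token limits affect how much text a model can read."
print(count_words(sample), "words,", estimate_tokens(sample), "estimated tokens")
```

For exact counts, use the tokenizer that belongs to the model you are calling.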

Prompt Tokens vs Output Tokens

Most AI requests include two token categories.

Input Tokens

These include:

- your prompt

- previous chat history

- system instructions

- uploaded text

Output Tokens

These are the tokens generated in the response.

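In most models, input and output tokens share the same context window, so both count toward the total limit. Some providers also set a separate cap on output tokens.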

Example of Token Limits in Action

Suppose a model supports a certain maximum token capacity.

If your request includes:

- long conversation history

- large pasted article

- detailed instructions

then less room is left for the answer.

That can lead to shorter or incomplete outputs.
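The arithmetic behind this example can be sketched in a few lines. All numbers below are hypothetical, and the sketch assumes input and output share one limit, which is common but not universal.

```python
def remaining_output_tokens(context_limit: int, input_tokens: int) -> int:
    """Tokens left for the answer once the input is counted (never negative)."""
    return max(0, context_limit - input_tokens)


# Hypothetical numbers, for illustration only.
context_limit = 8000
history_tokens = 3500       # long conversation history
article_tokens = 3800       # large pasted article
instruction_tokens = 400    # detailed instructions

used = history_tokens + article_tokens + instruction_tokens
print(remaining_output_tokens(context_limit, used))  # 300 tokens left for the answer
```

With only 300 tokens left, a long answer would be cut off well before it finishes.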

Token Limits vs Context Window

These terms are related.

Token Limit

The numeric cap on tokens.

Context Window

The total working space where tokens fit.

In practice, token limits help define the usable context window.

Why long chats lose memory

As conversations grow, earlier messages also consume tokens.

When limits are reached, systems may:

- remove old messages

- summarize earlier chat

- prioritize recent messages

- reduce retained detail

That is why AI sometimes forgets old context.
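One simple way a system might drop old messages is a sliding window that keeps only the most recent messages that fit a token budget. This is a minimal sketch, not how any particular product works. `trim_history` and `rough_count` are hypothetical names, and the character-based count is an assumed stand-in for a real tokenizer.

```python
from typing import Callable


def rough_count(text: str) -> int:
    """Assumed heuristic: about four characters per token."""
    return max(1, len(text) // 4)


def trim_history(
    messages: list[str],
    budget: int,
    count_tokens: Callable[[str], int] = rough_count,
) -> list[str]:
    """Keep the newest messages whose combined token count fits the budget."""
    kept: list[str] = []
    used = 0
    # Walk backwards so the most recent messages are kept first.
    for message in reversed(messages):
        cost = count_tokens(message)
        if used + cost > budget:
            break  # everything older than this point is dropped
        kept.append(message)
        used += cost
    kept.reverse()  # restore chronological order
    return kept


chat = ["a" * 40, "b" * 40, "c" * 40]  # three messages of about 10 tokens each
print(len(trim_history(chat, budget=25)))  # 2
```

Real systems may summarize the dropped messages instead of discarding them outright.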

Why businesses care about Token Limits

Companies using AI for operations need to manage token usage for:

- customer support chats

- document analysis

- meeting summaries

- research workflows

- coding assistants

- enterprise search tools

Better token efficiency often means lower cost and faster responses.

Real examples where Token Limits Matter

1. Long PDF Summaries

Large reports may need chunking.

2. Coding Projects

Big codebases can exceed limits.

3. Multi-step Strategy Work

Long prompts may reduce output space.

4. Support Conversations

Very long histories may lose details.

5. RAG Systems

Retrieved documents use token budget too.
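Chunking, mentioned above for long reports, can be sketched as splitting a document on paragraph breaks so each piece stays under a token budget. This is an illustrative sketch only. `chunk_text` is a hypothetical helper, and the four-characters-per-token ratio is an assumed heuristic rather than a real tokenizer.

```python
def chunk_text(text: str, max_tokens: int, chars_per_token: int = 4) -> list[str]:
    """Split text into chunks that each fit an approximate token budget."""
    if max_tokens <= 0:
        raise ValueError("max_tokens must be positive")
    max_chars = max_tokens * chars_per_token
    chunks: list[str] = []
    current = ""
    for paragraph in text.split("\n\n"):
        paragraph = paragraph.strip()
        if not paragraph:
            continue
        # A single oversized paragraph is hard-split into budget-sized pieces.
        while len(paragraph) > max_chars:
            if current:
                chunks.append(current)
                current = ""
            chunks.append(paragraph[:max_chars])
            paragraph = paragraph[max_chars:]
        candidate = f"{current}\n\n{paragraph}" if current else paragraph
        if len(candidate) <= max_chars:
            current = candidate  # paragraph still fits in the current chunk
        else:
            chunks.append(current)  # close the chunk and start a new one
            current = paragraph
    if current:
        chunks.append(current)
    return chunks


report = "aaaa\n\nbbbb\n\ncccc"
print(chunk_text(report, max_tokens=3))
```

Each chunk can then be summarized on its own, and the partial summaries combined in a final request.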

Popular AI companies improving token capacity

Many providers continue expanding long-context capabilities.

Longer context is a major competitive area.

How to work within LLM Token Limits

1. Use concise prompts

Remove unnecessary text.

2. Break large tasks into steps

Use multiple smaller prompts.

3. Summarize previous context

Replace long history with summaries.

4. Send only relevant content

Avoid dumping entire documents if not needed.

5. Ask for structured answers

Focused outputs use fewer tokens.
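The tip about sending only relevant content can be sketched as a small filter that ranks passages by word overlap with the question and keeps the best ones that fit a token budget. This is a deliberately simple illustration. `select_relevant` is a hypothetical helper, the overlap score is an assumed stand-in for a real retrieval method, and token counts use an assumed four-characters-per-token heuristic.

```python
import re


def select_relevant(
    question: str,
    passages: list[str],
    budget: int,
    chars_per_token: int = 4,
) -> list[str]:
    """Pick the passages that best match the question and fit the token budget."""
    query_words = set(re.findall(r"\w+", question.lower()))

    def score(passage: str) -> int:
        # Number of distinct question words that also appear in the passage.
        return len(query_words & set(re.findall(r"\w+", passage.lower())))

    selected: list[str] = []
    used = 0
    for passage in sorted(passages, key=score, reverse=True):
        cost = -(-len(passage) // chars_per_token)  # ceiling division
        if score(passage) == 0 or used + cost > budget:
            continue  # skip unrelated passages and ones that do not fit
        selected.append(passage)
        used += cost
    return selected


docs = [
    "Our refund policy lasts 30 days.",
    "The office is closed on Sunday.",
    "Refund requests need a receipt.",
]
print(select_relevant("refund policy", docs, budget=100))
```

Production systems usually rank with embeddings or a search index, but the budgeting step looks much the same.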

Do bigger token limits always mean better AI?

Not always.

A model may have:

- huge token capacity but average reasoning

- smaller capacity but stronger answers

Token capacity matters, but model quality matters too.

Common beginner mistakes

- confusing tokens with words

- using overly long prompts

- forgetting outputs also use tokens

- assuming memory is unlimited

- sending irrelevant background text

Token limits and cost

Many AI pricing systems are linked to token usage.

More tokens can mean:

- higher API cost

- slower responses

- larger compute demand

That is why businesses optimize prompts carefully.
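A rough cost estimate shows why this matters at scale. The prices below are hypothetical placeholders, not any provider's real rates, and `estimate_cost` is an illustrative helper that assumes separate per-million-token prices for input and output.

```python
def estimate_cost(
    input_tokens: int,
    output_tokens: int,
    input_price_per_million: float,
    output_price_per_million: float,
) -> float:
    """Estimated cost in currency units for a given token volume."""
    return (
        input_tokens * input_price_per_million
        + output_tokens * output_price_per_million
    ) / 1_000_000


# Hypothetical workload: 10,000 requests, each with 2,000 input and 500 output tokens.
requests = 10_000
total_cost = estimate_cost(
    input_tokens=2_000 * requests,
    output_tokens=500 * requests,
    input_price_per_million=1.00,   # assumed price, for illustration
    output_price_per_million=3.00,  # assumed price, for illustration
)
print(f"{total_cost:.2f}")  # 35.00
```

Halving the prompt from 2,000 to 1,000 tokens in this example would cut the total from 35.00 to 25.00.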


FAQ: LLM Token Limits

What are LLM token limits?

The maximum number of tokens an AI model can handle in one request.

Are tokens the same as words?

No. Tokens may be full words or parts of words.

Why does AI stop mid-answer?

The response may have hit the output token limit.

Why does AI forget old chat messages?

Older messages may be removed when token capacity fills up.

Should I always use long prompts?

No. Clear, focused prompts usually work better.

Final takeaway

LLM token limits control how much text an AI model can process and generate at one time. They influence memory, prompt length, output size, speed, and cost.

If you understand token limits, you can prompt smarter, reduce waste, and get better results from AI tools.