How LLMs Work: Simple Beginner Guide to Large Language Models

Large Language Models (LLMs) power many of today’s most popular AI tools. They can answer questions, write content, summarize documents, generate code, and hold natural conversations.

But many people still ask one important question:

How do LLMs actually work?

This guide explains how LLMs work in simple language without unnecessary technical jargon.

In simple terms

LLMs work by learning patterns from massive amounts of text and then predicting the most likely next words when you give them a prompt.

Think of them as advanced prediction engines trained on language.

They do not “think” like humans. Instead, they calculate likely outputs based on training and context.

What is an LLM?

LLM stands for Large Language Model.

It is:

- Large because it is trained on huge datasets and has many parameters

- Language because it works with text, speech transcripts, and code

- Model because it is a trained AI system based on neural networks

Popular LLM tools are created by companies such as:

- OpenAI

- Anthropic

- Meta

- Mistral AI

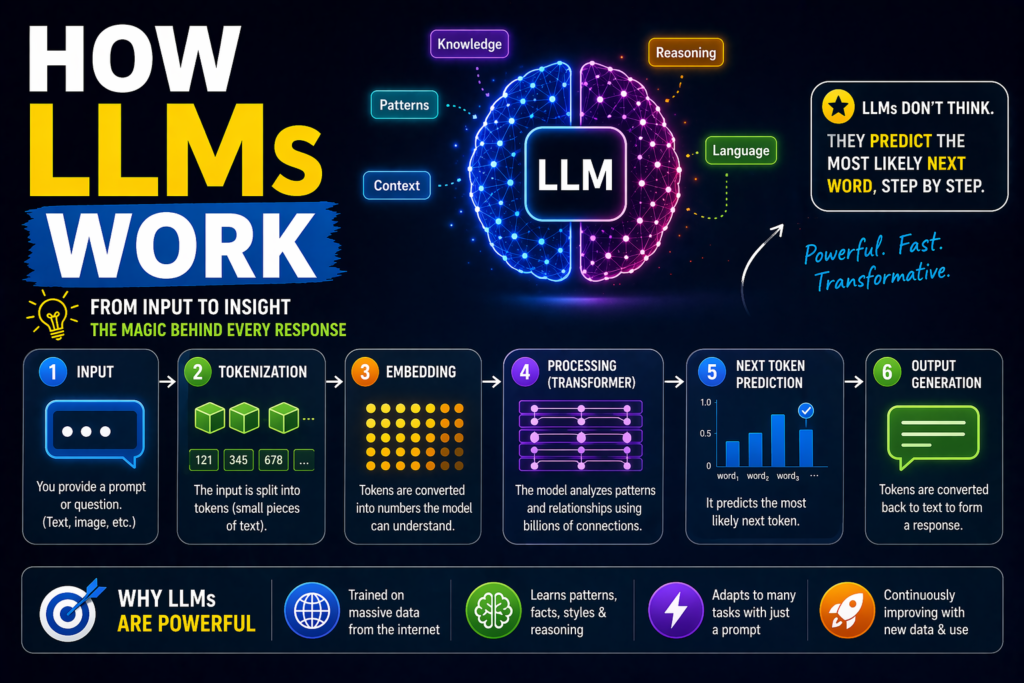

Step-by-step: How LLMs work

1. Training on huge amounts of text

Before an LLM can answer users, it is trained using enormous collections of text such as:

- books

- articles

- websites

- documentation

- code repositories

- public datasets

During training, the model studies patterns in language.

It learns:

- grammar

- spelling

- sentence structure

- facts and concepts

- writing styles

- relationships between words
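
As a rough illustration of what "learning patterns" can mean, the sketch below counts which word follows which in a tiny made-up corpus. This is only a toy analogy: real LLMs train neural networks rather than counting word pairs, and the corpus and the function name `train_bigram_counts` are hypothetical.

```python
from collections import Counter, defaultdict

def train_bigram_counts(corpus):
    """Count how often each word is followed by each other word."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        # Pair every word with the word that comes right after it.
        for current_word, next_word in zip(words, words[1:]):
            counts[current_word][next_word] += 1
    return counts

# Tiny made-up corpus, for illustration only.
corpus = [
    "the doctor saw the patient",
    "the patient left the hospital",
    "the doctor left the hospital",
]

counts = train_bigram_counts(corpus)
# Words seen after "the", with how often each one appeared.
print(counts["the"].most_common())
```

Even this simple counter picks up that "doctor", "patient", and "hospital" tend to follow "the" in this corpus. An LLM learns far richer patterns, but the idea of extracting regularities from text is similar.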

2. Breaking text into tokens

LLMs do not read text exactly like humans.

They break text into smaller units called tokens.

For example:

“Artificial intelligence is useful”

The model may split this sentence into smaller pieces, such as whole words or parts of words, depending on its tokenizer.

Tokens can be:

- words

- parts of words

- punctuation

- symbols

This helps the model analyze language mathematically.
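
The idea of turning text into tokens and then into numbers can be sketched as follows. This is a toy scheme that splits on words and punctuation; real LLM tokenizers usually use subword methods such as byte pair encoding, and the function name `toy_tokenize` is hypothetical.

```python
import re

def toy_tokenize(text):
    """Split text into word and punctuation tokens (toy scheme, not a real LLM tokenizer)."""
    return re.findall(r"\w+|[^\w\s]", text)

tokens = toy_tokenize("Artificial intelligence is useful.")

# Give each distinct token an integer ID, in order of first appearance.
vocab = {}
for token in tokens:
    vocab.setdefault(token, len(vocab))

token_ids = [vocab[token] for token in tokens]

print(tokens)
print(token_ids)
```

The list of integer IDs is what the model actually works with; the text itself is never processed directly.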

3. Using neural networks

LLMs use deep neural networks with many layers.

These layers detect relationships between tokens and patterns.

For example, the model learns that words like:

- doctor

- hospital

- patient

are often related.

Or:

- code

- Python

- function

belong to programming contexts.

Across many layers, the model builds up internal representations of how words and concepts relate to each other.
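
One common way to picture "related words" is to represent each word as a list of numbers and measure how closely two lists point in the same direction. The sketch below uses cosine similarity on hand-picked vectors. The numbers are invented for illustration; a real model learns vectors with hundreds or thousands of dimensions during training.

```python
import math

def cosine_similarity(a, b):
    """Return a score near 1.0 when two vectors point in a similar direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hand-picked 3-dimensional vectors, invented for illustration.
embeddings = {
    "doctor": [0.9, 0.8, 0.1],
    "hospital": [0.8, 0.9, 0.2],
    "python": [0.1, 0.2, 0.9],
}

medical_pair = cosine_similarity(embeddings["doctor"], embeddings["hospital"])
mixed_pair = cosine_similarity(embeddings["doctor"], embeddings["python"])

print(round(medical_pair, 2))
print(round(mixed_pair, 2))
```

With these made-up vectors, "doctor" and "hospital" score much higher than "doctor" and "python", which mirrors the kind of relationship the model picks up from text.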

4. Understanding your prompt

When you type:

“Explain cloud computing simply”

The model processes:

- meaning of cloud computing

- your request for simplicity

- expected explanation style

- previous conversation context

This helps shape the response.

5. Predicting the next token

This is the core process.

The LLM repeatedly predicts the most likely next token.

Example:

“Cloud computing means using…”

The model might then predict a token such as:

- internet

- remote

- online

- servers

One token at a time, very quickly.

This repeated prediction builds full sentences.
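
A single prediction step can be sketched like this: the model assigns a score to each candidate token, the scores are converted into probabilities with a softmax function, and one token is chosen. The scores below are made up for illustration, and picking the highest probability is only one possible selection strategy.

```python
import math

def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    max_score = max(scores.values())
    # Subtracting the maximum keeps the exponentials numerically stable.
    exps = {token: math.exp(score - max_score) for token, score in scores.items()}
    total = sum(exps.values())
    return {token: value / total for token, value in exps.items()}

# Made-up scores for candidate tokens after "Cloud computing means using".
scores = {"remote": 2.5, "internet": 2.0, "online": 1.5, "servers": 1.0}

probabilities = softmax(scores)
# Greedy choice: take the token with the highest probability.
next_token = max(probabilities, key=probabilities.get)

print({token: round(p, 3) for token, p in probabilities.items()})
print(next_token)
```

A real model scores every token in its vocabulary, often tens of thousands of candidates, rather than just four.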

6. Returning the final response

After many rapid token predictions, you receive a complete answer.

Because each prediction takes only a fraction of a second, responses can appear almost instantly.
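
The repeated predict-and-append loop can be sketched as below. The lookup table `TOY_NEXT_TOKEN` is a hypothetical stand-in for a trained model, and it only looks at the last token; a real LLM considers the whole context when predicting.

```python
# Hypothetical lookup table standing in for a trained model:
# each token maps to the token the "model" considers most likely next.
TOY_NEXT_TOKEN = {
    "Cloud": "computing",
    "computing": "means",
    "means": "using",
    "using": "remote",
    "remote": "servers",
    "servers": "<end>",
}

def generate(prompt_tokens, max_new_tokens=10):
    """Append one predicted token at a time until an end marker or the limit."""
    tokens = list(prompt_tokens)
    for _ in range(max_new_tokens):
        next_token = TOY_NEXT_TOKEN.get(tokens[-1], "<end>")
        if next_token == "<end>":
            break
        tokens.append(next_token)
    return tokens

result = generate(["Cloud", "computing"])
print(" ".join(result))  # prints: Cloud computing means using remote servers
```

The loop stops either when the model produces an end marker or when it reaches the token limit, which is roughly how response length is bounded in practice.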

Why responses feel intelligent

LLMs often seem intelligent because they combine:

- strong language fluency

- pattern recognition

- memory of conversation context

- broad topic exposure

- reasoning-like output patterns

However, this is not human consciousness.

It is advanced statistical language generation.

What is a context window?

A context window is the amount of text an LLM can consider at one time.

This may include:

- your current prompt

- previous chat messages

- uploaded text

- system instructions

Larger context windows help with long documents and ongoing conversations.
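
One way an application might handle a limited context window is to keep only the most recent messages that fit a token budget. The sketch below assumes a crude word count in place of a real tokenizer, and the function names are hypothetical. It also drops old messages without exception, whereas real applications often keep system instructions in place.

```python
def count_tokens(text):
    """Rough stand-in for a tokenizer: counts whitespace-separated words."""
    return len(text.split())

def fit_to_context(messages, max_tokens):
    """Keep the most recent messages whose combined size fits the budget."""
    kept = []
    used = 0
    # Walk backwards so the newest messages are considered first.
    for message in reversed(messages):
        cost = count_tokens(message)
        if used + cost > max_tokens:
            break
        kept.append(message)
        used += cost
    return list(reversed(kept))

history = [
    "You are a helpful assistant.",
    "What is cloud computing?",
    "Cloud computing means using remote servers over the internet.",
    "Explain it more simply.",
]

visible = fit_to_context(history, max_tokens=14)
print(visible)
```

With a budget of 14 words, only the last two messages fit, so the model would no longer "see" the earlier ones.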

What affects LLM output quality?

Prompt quality

Better prompts create better answers.

Instead of:

“Write something.”

Use:

“Write a professional LinkedIn post about AI productivity in 120 words.”

Context quality

Good input leads to better output.

Model quality

Different models have different strengths.

Settings

Temperature and other controls can affect creativity and consistency.
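
The effect of temperature can be sketched by dividing the model's scores by the temperature before converting them to probabilities. The scores here are made up for illustration, and the function name is hypothetical.

```python
import math

def softmax_with_temperature(scores, temperature):
    """Turn scores into probabilities; lower temperature sharpens the distribution."""
    scaled = [score / temperature for score in scores]
    max_scaled = max(scaled)
    exps = [math.exp(value - max_scaled) for value in scaled]
    total = sum(exps)
    return [value / total for value in exps]

scores = [2.5, 2.0, 1.5, 1.0]  # made-up scores for four candidate tokens

low = softmax_with_temperature(scores, temperature=0.5)
high = softmax_with_temperature(scores, temperature=2.0)

print([round(p, 3) for p in low])
print([round(p, 3) for p in high])
```

At the lower temperature, the top candidate takes a much larger share of the probability, so output is more consistent. At the higher temperature, the probabilities are closer together, so less likely tokens are chosen more often and output varies more.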

Can LLMs make mistakes?

Yes.

Common issues include:

Hallucinations

Invented or false facts.

Misunderstanding prompts

Especially vague requests.

Outdated information

If not connected to current data.

Math or logic errors

Possible in complex cases.

That is why human review matters.

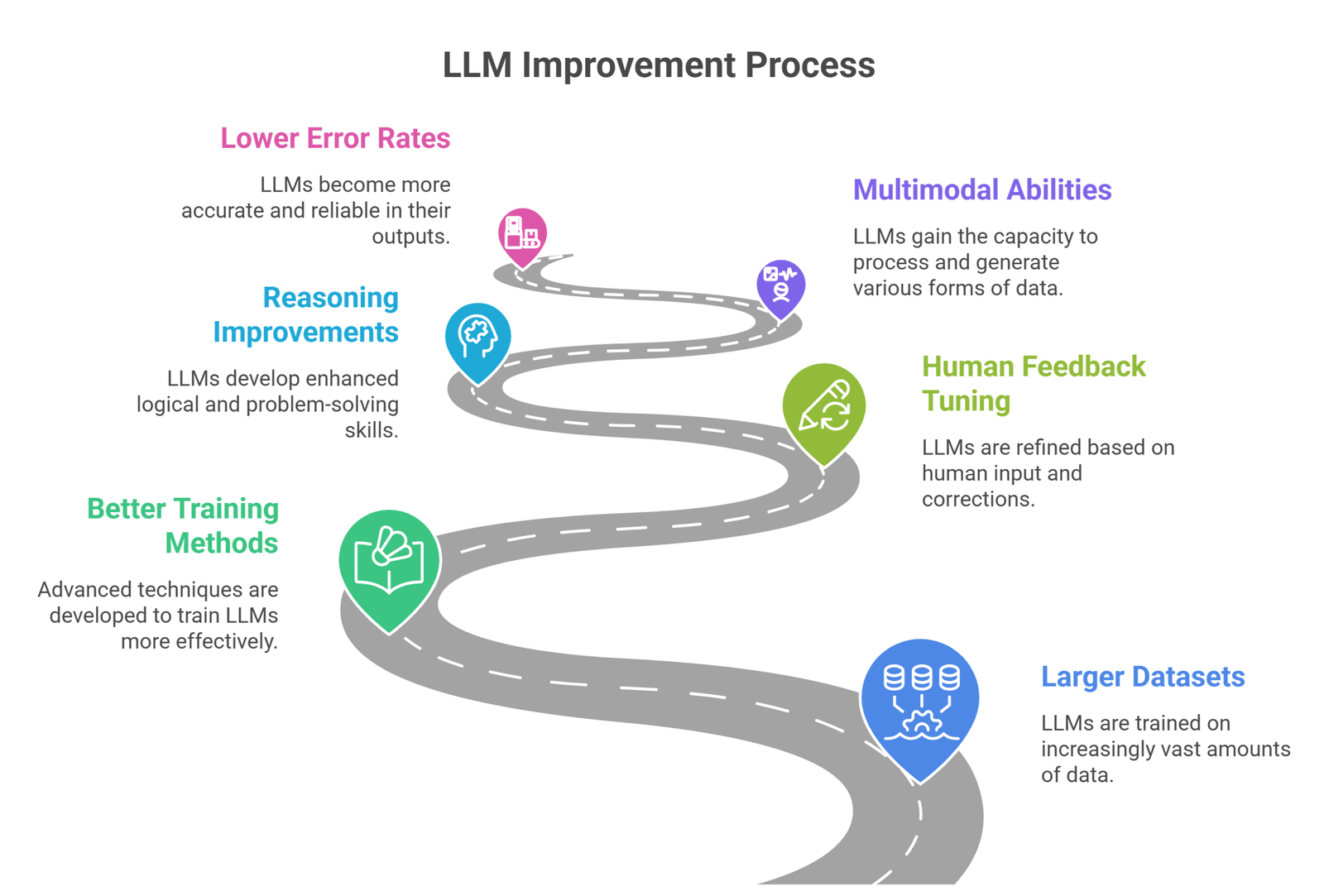

How LLMs improve over time

Modern LLMs improve through:

- larger datasets

- better training methods

- human feedback tuning

- reasoning improvements

- multimodal abilities

- lower error rates

Each generation becomes more useful.

Real-world uses: How LLMs work

Because they predict and generate language well, LLMs are used for:

- chatbots

- customer support

- writing tools

- coding assistants

- search summaries

- tutoring systems

- workflow automation

LLM vs Search Engine

| Feature | LLM | Search Engine |
| --- | --- | --- |
| Direct answers | Yes | Sometimes |
| Conversation | Yes | Limited |
| Creativity | High | Low |
| Live web info | Depends | Strong |
| Summarization | Strong | Moderate |

Many users combine both.

Best way for beginners to use LLMs

Be specific

Clear prompts work best.

Add context

Audience, tone, goal.

Iterate

Refine prompts for better answers.

Verify facts

Especially on important topics.
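
These tips can be turned into a small reusable template. The sketch below is one possible way to assemble a specific prompt with context; the function name `build_prompt` and its fields are hypothetical, not a standard format.

```python
def build_prompt(task, audience, tone, goal, word_limit=None):
    """Combine a task with audience, tone, and goal into one clear prompt."""
    parts = [
        f"Task: {task}",
        f"Audience: {audience}",
        f"Tone: {tone}",
        f"Goal: {goal}",
    ]
    if word_limit is not None:
        parts.append(f"Length: about {word_limit} words")
    return "\n".join(parts)

prompt = build_prompt(
    task="Write a LinkedIn post about AI productivity",
    audience="busy professionals",
    tone="professional",
    goal="share practical tips",
    word_limit=120,
)
print(prompt)
```

If the first answer misses the mark, changing one field at a time makes it easier to see which change improved the result.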

Suggested Read:

- LLM for Beginners

- LLM Explained Simply

- What Is Generative AI? Complete Beginner Guide

- Prompt Engineering Explained Simply

- Hallucination Reduction Prompts

- How AI Agents Work Explained

FAQ: How LLMs Work

How do LLMs work simply?

They learn patterns from text and predict likely next words based on prompts.

Do LLMs think like humans?

No. They generate outputs using learned patterns.

Why are LLMs called large?

Because they are trained on large datasets and have many parameters.

Can LLMs learn after every chat?

Not usually. Most LLMs do not update what they have learned after each chat; within a conversation, they only draw on the text in their context window.

Are LLMs always correct?

No. Verification is important.

Final takeaway

LLMs work by learning language patterns from massive text datasets and generating responses one token at a time. That simple core idea powers many of the world’s most advanced AI tools.

Once you understand how LLMs work, it becomes much easier to use AI effectively.