Why LLMs Hallucinate: Causes, Examples & How to Reduce It

Large Language Models (LLMs) can answer questions, summarize reports, generate code, and write content in seconds. But they also have a known weakness:

Sometimes they produce answers that sound confident but are wrong.

This behavior is called hallucination.

Understanding why LLMs hallucinate is essential for users, developers, and businesses deploying AI tools.

This guide explains hallucinations in simple language, including causes, examples, and practical ways to reduce them.

In simple terms

An LLM hallucination occurs when an AI model generates false, misleading, or invented information and presents it as if it were true.

Examples:

- fake facts

- invented citations

- wrong summaries

- imaginary APIs in code

- incorrect calculations

- made-up product details

The answer may sound fluent, even when incorrect.

Why Hallucinations Happen

LLMs are not databases. They are prediction systems.

They generate the most likely next words based on patterns learned during training.

That means they optimize for:

- plausible language

- coherent responses

- pattern completion

They do not automatically verify truth before answering.
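To make the idea of "prediction, not verification" concrete, here is a minimal toy sketch. It is not how a real LLM is implemented: the table `NEXT_WORD_PROBS` and its probabilities are invented for illustration, and the function names are hypothetical. It only shows that choosing the most likely continuation involves no truth check.

```python
import random

# Hypothetical toy "model": invented probabilities for the next word.
# Real models compute such distributions with a neural network.
NEXT_WORD_PROBS = {
    "The capital of Australia is": {
        "Sydney": 0.55,     # appears often in text, but is wrong
        "Canberra": 0.40,   # correct
        "Melbourne": 0.05,
    }
}

def most_likely_next_word(prompt):
    """Return the highest-probability continuation (greedy choice)."""
    probs = NEXT_WORD_PROBS[prompt]
    return max(probs, key=probs.get)

def sample_next_word(prompt, rng):
    """Sample a continuation in proportion to its probability."""
    probs = NEXT_WORD_PROBS[prompt]
    words = list(probs)
    weights = [probs[word] for word in words]
    return rng.choices(words, weights=weights, k=1)[0]

prompt = "The capital of Australia is"
print(most_likely_next_word(prompt))  # Sydney
```

In this toy setup the most plausible word wins even though it is wrong, because nothing in the selection step checks facts.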

Easy analogy

Imagine a student who has read millions of books but is taking a test without internet access.

They remember patterns well, but if unsure, they may guess confidently.

That is similar to how hallucinations happen.

Main Reasons LLMs Hallucinate

1. Missing Knowledge

If the model lacks reliable knowledge on a topic, it may guess.

This is especially common with:

- recent news

- niche subjects

- private company data

- rare technical issues

2. Prediction Over Verification

The model predicts text sequences, not truth labels.

Good grammar does not equal factual accuracy.

3. Ambiguous Prompts

Vague prompts force the model to guess which meaning you intend, so it may confidently answer the wrong question.

Example:

“Tell me about Mercury.”

This could refer to:

- planet

- element

- car brand

- mythology

4. Overconfidence in Language Style

LLMs are trained to sound natural and helpful.

Sometimes that creates confident wording around uncertain content.

5. Weak Retrieval Systems

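In RAG (retrieval-augmented generation) or search-connected systems, the model answers from the documents it is given. If retrieval returns irrelevant or outdated documents, the answer can still be wrong.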

6. Long Context Confusion

In very long conversations or documents, the model may lose track of earlier details or mix up sources, which can increase mistakes.

Real-world Examples of LLM Hallucinations

Wrong Facts

Incorrect dates, names, or statistics.

Fake Sources

Invented research papers or URLs.

Coding Errors

Non-existent functions or outdated syntax.

Legal Mistakes

Incorrect citations or precedent claims.

Business Summaries

Missing critical context or fabricating trends.

Why LLM Hallucinations Matter

For casual use, errors may be harmless.

For serious use, they can cause:

- financial mistakes

- legal risk

- poor decisions

- customer trust loss

- engineering bugs

- misinformation spread

That is why verification matters.

Which AI ecosystems work on reducing this?

Many providers are actively working to improve reliability. Reducing hallucinations is a major industry focus.

How to Reduce LLM Hallucinations

1. Use Better Prompts

Be specific.

Instead of:

“Explain taxes.”

Use:

“Explain basic income tax filing for freelancers in India.”

2. Ask for Sources

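Request citations or evidence, then check that the cited sources exist and support the claim, because models can invent citations too.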
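Because a model can invent sources, it helps to check any cited links against a list you already trust. The sketch below is one simple way to do that, assuming citations appear as plain URLs in the answer. The function names and the example URLs are hypothetical.

```python
import re

# Matches http(s) URLs up to the next whitespace or closing bracket/quote.
URL_PATTERN = re.compile(r"https?://[^\s)\]>\"']+")

def extract_urls(text):
    """Return URLs found in the text, without trailing punctuation."""
    return [url.rstrip(".,;") for url in URL_PATTERN.findall(text)]

def unverified_urls(answer, trusted_urls):
    """Return cited URLs that are not in the trusted list."""
    trusted = set(trusted_urls)
    return [url for url in extract_urls(answer) if url not in trusted]

# Hypothetical example: one known source and one the model may have invented.
answer = "See https://example.com/a and https://example.org/made-up."
print(unverified_urls(answer, ["https://example.com/a"]))
```

A URL flagged here is not necessarily fake. It only means a person should open it and confirm it exists and says what the model claims.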

3. Use RAG Systems

Connect the model to trusted documents.
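As a rough illustration, the sketch below retrieves trusted documents by simple keyword overlap and builds a prompt that tells the model to answer only from them. Real RAG systems usually use embedding-based search rather than keyword overlap. The documents, function names, and the `min_overlap` threshold are all assumptions made for this example.

```python
import re

# Hypothetical trusted documents; in practice these come from your own knowledge base.
DOCUMENTS = [
    "Refunds are available within 30 days of purchase.",
    "Support is open Monday to Friday, 9am to 5pm.",
]

def tokenize(text):
    """Lowercase the text and return its set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question, documents, min_overlap=2):
    """Return documents sharing at least min_overlap words with the question, best first."""
    question_words = tokenize(question)
    scored = [(len(question_words & tokenize(doc)), doc) for doc in documents]
    scored = [(score, doc) for score, doc in scored if score >= min_overlap]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored]

def build_prompt(question, documents):
    """Build a grounded prompt, or return None when nothing relevant is found."""
    context = retrieve(question, documents)
    if not context:
        return None  # better to decline than to let the model guess
    joined = "\n".join(f"- {doc}" for doc in context)
    return (
        "Answer using only the context below. "
        "If the context does not contain the answer, say you do not know.\n"
        f"Context:\n{joined}\n"
        f"Question: {question}"
    )

print(build_prompt("Are refunds available after purchase?", DOCUMENTS))
```

Returning `None` when no document matches is a deliberate choice in this sketch: the calling code can then show a "no answer found" message instead of sending an ungrounded question to the model.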

4. Break Complex Tasks into Steps

Smaller tasks reduce confusion.

5. Use Human Review

Critical outputs should be checked by a qualified person before they are used.

6. Ask the Model to State Uncertainty

Prompt:

“If unsure, say you are unsure.”
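One way to apply this consistently is to append a fixed instruction to every question and then detect when the model uses it. The sketch below assumes the model follows the instruction and replies with the exact phrase. The wording and function names are hypothetical, and a real model may phrase its uncertainty differently.

```python
# Hypothetical fixed instruction appended to every question.
UNCERTAINTY_INSTRUCTION = (
    "If you are not sure of the answer, reply exactly with: I am not sure."
)

def with_uncertainty_instruction(question):
    """Append the uncertainty instruction to a question."""
    return f"{question.strip()}\n\n{UNCERTAINTY_INSTRUCTION}"

def is_abstention(answer):
    """Return True when the answer is the agreed 'not sure' phrase."""
    normalized = answer.strip().lower().rstrip(".")
    return normalized == "i am not sure"

print(with_uncertainty_instruction("Who founded the company in 1987?"))
```

Asking for one exact phrase makes abstentions easy to detect in code, so they can be routed to a person or a search step instead of being shown as an answer.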

7. Compare Multiple Answers

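Ask the same question several times, or ask more than one model, and treat disagreement as a signal to verify. This is useful for sensitive workflows.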
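A simple way to compare answers is a majority vote: collect several answers to the same question and accept one only when enough of them agree. The sketch below assumes short answers that can be compared as text. The function names and the `min_agreement` threshold are hypothetical choices for illustration.

```python
from collections import Counter

def normalize(answer):
    """Lowercase, collapse whitespace, and drop a trailing period."""
    return " ".join(answer.lower().split()).rstrip(".")

def majority_answer(answers, min_agreement=0.6):
    """Return (answer, agreement) when enough answers agree, else (None, agreement)."""
    if not answers:
        return None, 0.0
    counts = Counter(normalize(answer) for answer in answers)
    top_answer, top_count = counts.most_common(1)[0]
    agreement = top_count / len(answers)
    if agreement >= min_agreement:
        return top_answer, agreement
    return None, agreement

# Hypothetical answers collected from three separate runs.
answer, agreement = majority_answer(["Canberra", "canberra.", "Sydney"])
print(answer)  # canberra
```

Agreement is not proof of correctness, since a model can repeat the same mistake. It is better treated as a cheap filter that flags unstable answers for human review.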

Hallucinations vs lying

These are different.

| Term | Meaning |
| --- | --- |
| Hallucination | Incorrect output due to prediction errors |
| Lying | Intentional deception |

LLMs do not “intend” to deceive the way humans can.

Hallucinations vs Bias

Bias means unfair or skewed patterns.

Hallucination means fabricated or false information.

A specific response can show one, both, or neither.

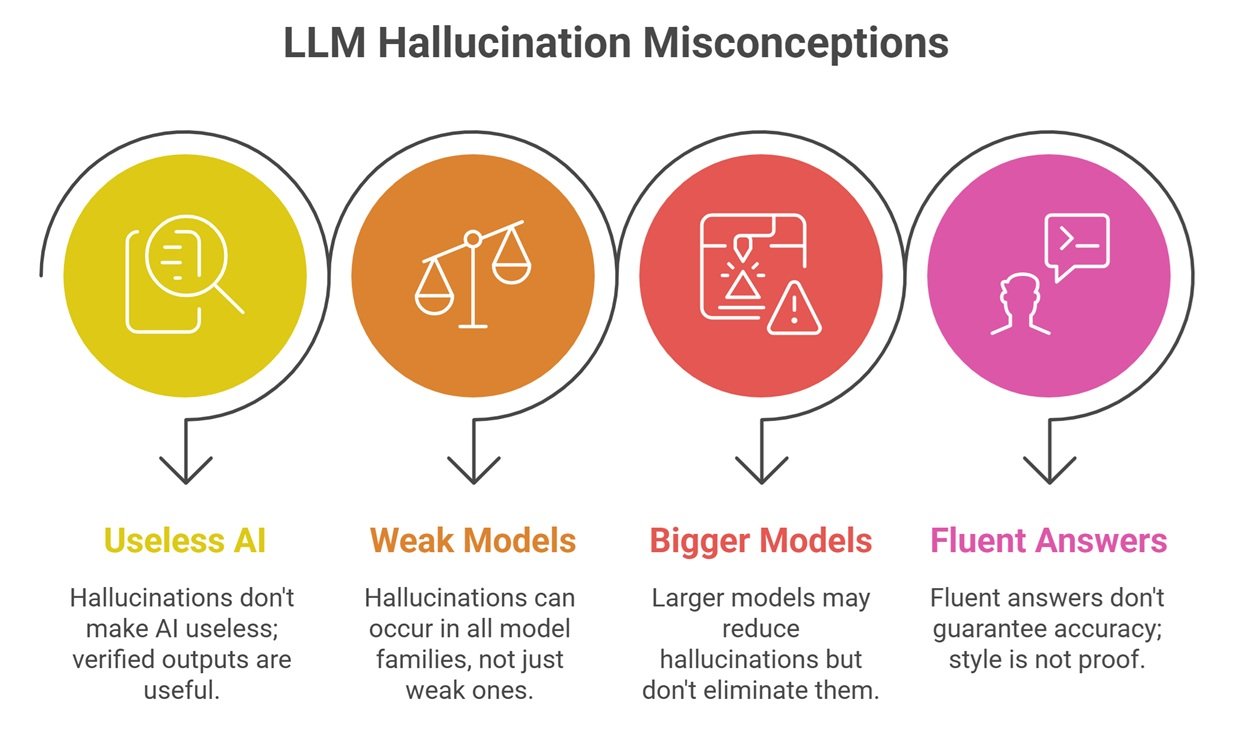

Why LLMs Hallucinate: Common Misconceptions

Hallucinations mean AI is useless

No. Many outputs are highly useful when verified.

Only weak models hallucinate

All model families can hallucinate.

Bigger models never hallucinate

Larger models may improve, but not eliminate the issue.

Fluent answers are accurate

Style is not proof.

Best use cases despite hallucination risk

LLMs still excel at:

- brainstorming

- drafting

- summarization with review

- coding assistance with testing

- customer support with guardrails

- search copilots with citations

Future of hallucination reduction

Expect progress in:

- retrieval-first AI systems

- better uncertainty estimation

- stronger reasoning checks

- tool-using AI agents

- automatic fact verification

- domain-specific trusted models

Hallucinations may become less frequent, but they are unlikely to disappear completely.

FAQ: Why LLMs Hallucinate

Why do LLMs hallucinate?

Because they predict likely text patterns rather than guaranteed truth.

Can hallucinations be removed completely?

Probably not completely, but they can be reduced.

Are hallucinations dangerous?

They can be in high-stakes use cases.

Do all AI models hallucinate?

Yes, to varying degrees.

What is the best defense?

Good prompting, retrieval systems, and human review.

Final takeaway

LLM hallucinations happen because language models generate plausible answers, not guaranteed truth. They are powerful tools, but not infallible sources.

Use AI for speed and productivity, but apply verification where accuracy matters most.