LLM Context Window Explained: What It Means and Why It Matters

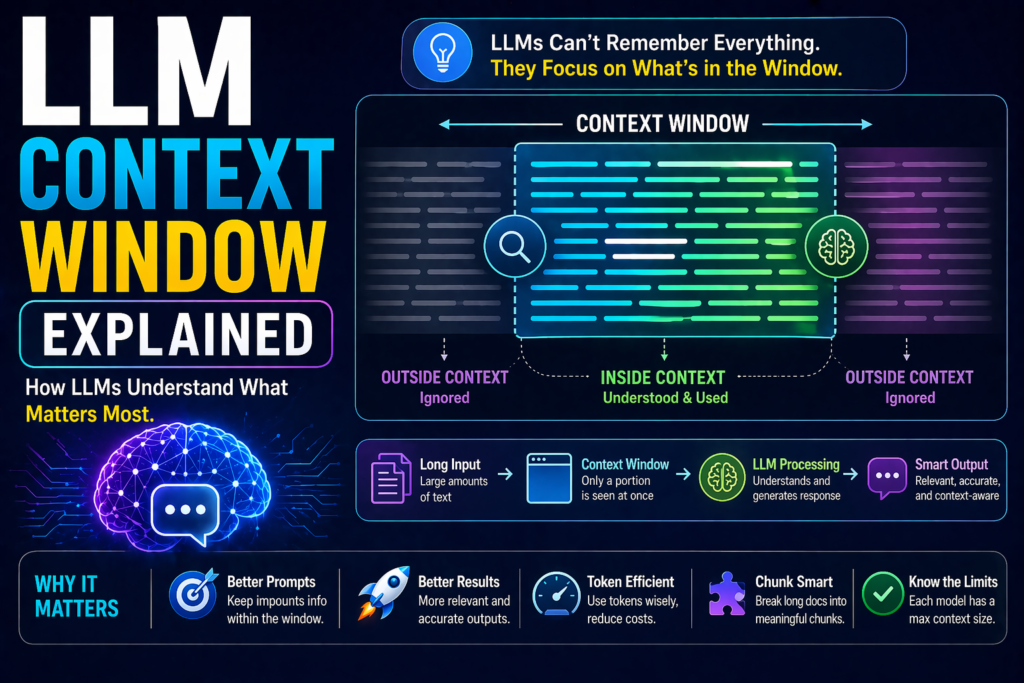

When using AI tools, you may hear terms like context window, token limit, or long-context models. These terms are important because they directly affect how much information an AI model can process at one time.

If you have ever wondered why an AI forgets earlier parts of a conversation or struggles with very large documents, the context window is often the reason.

This guide explains LLM context windows in simple language for beginners and business users.

In simple terms

A context window is:

The maximum amount of text an LLM can consider at one time while generating a response.

That text may include:

- your current prompt

- previous chat messages

- uploaded documents

- system instructions

- tool outputs

- the model’s current draft response

Think of it like the model’s working memory during a task.

Why context windows matter

A larger context window can help AI:

- remember more of the conversation

- analyze longer documents

- compare multiple sources

- write long structured outputs

- maintain consistency across tasks

- support complex workflows

A smaller context window means older or excess information may be dropped.

Simple analogy

Imagine reading a book through a small window.

If the window is tiny, you only see a paragraph.

If the window is large, you can see many pages.

That is similar to how LLMs handle information.

What goes inside a context window?

Many users think only the prompt counts. In reality, several inputs may use space.

1. User Prompt

Your question or instruction.

2. Conversation History

Earlier messages in the chat.

3. System Instructions

Rules guiding model behavior.

4. Uploaded Text

Documents, notes, pasted content.

5. Generated Response

The answer also uses tokens.

All of these compete for limited capacity.
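To make the idea of competing for capacity concrete, here is a minimal sketch of a token budget. The window size, the part names, and the token counts are all made-up numbers for illustration; real models and tools differ.

```python
# Assumed context window size for illustration only; real limits vary by model.
CONTEXT_WINDOW = 8_000


def remaining_tokens(parts: dict[str, int], window: int = CONTEXT_WINDOW) -> int:
    """Return how many tokens are left once every part has been counted."""
    return window - sum(parts.values())


# Hypothetical token counts for each input that shares the window.
parts = {
    "system_instructions": 300,
    "conversation_history": 2_500,
    "uploaded_text": 4_000,
    "user_prompt": 200,
}

# Whatever is left over is the space available for the generated response.
print(remaining_tokens(parts))  # 1000
```

In this example the inputs take 7,000 tokens, so only 1,000 remain for the answer. A negative result would mean the inputs alone already exceed the window.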

What are tokens?

LLMs usually measure context windows in tokens, not words.

Tokens may be:

- full words

- parts of words

- punctuation

- numbers

- symbols

Example:

A 1,000-word English article often uses more than 1,000 tokens; roughly 1,300 is a common rule of thumb. The exact count depends on the tokenizer, the language, and the formatting.

That is why token limits matter.
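For a quick feel of the difference between words and tokens, the sketch below estimates tokens from character count. The figure of about four characters per token is a rough rule of thumb for English and is an assumption here, not a real tokenizer; `estimate_tokens` is a hypothetical helper. For exact counts, use the tokenizer that belongs to the model you are working with.

```python
import math

# Rough rule of thumb for English text; an assumption, not a real tokenizer.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Return a very rough token estimate based on character count."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


sample = "Context windows are measured in tokens, not words."
words = len(sample.split())
print(f"{words} words, about {estimate_tokens(sample)} tokens (estimate)")
```

The estimate is only useful for budgeting. Code, non-English text, and unusual formatting can all shift the real number noticeably.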

How context windows affect output quality

Better Memory

Larger windows help models remember earlier details.

Stronger Document Analysis

Useful for PDFs, reports, contracts, research.

Fewer Repeated Questions

The model can retain prior context longer.

More Complex Workflows

Helpful for coding, planning, and multi-step tasks.

What happens when you exceed the context window?

If too much text is included, the system may:

- trim older messages

- summarize previous context

- ignore less relevant text

- reduce response quality

- forget earlier instructions

This is why long chats sometimes drift: the earliest messages and instructions may no longer be inside the context window.
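The first behavior in the list, trimming older messages, can be sketched as follows. This is one simple strategy under stated assumptions, not how any particular product works; `trim_history` and the word-based counter are hypothetical names chosen for illustration.

```python
from typing import Callable


def trim_history(
    messages: list[str], budget: int, count_tokens: Callable[[str], int]
) -> list[str]:
    """Keep the most recent messages that fit within the token budget."""
    kept: list[str] = []
    used = 0
    for message in reversed(messages):  # walk from newest to oldest
        cost = count_tokens(message)
        if used + cost > budget:
            break  # this message and everything older is dropped
        kept.append(message)
        used += cost
    return list(reversed(kept))  # restore chronological order


def word_count(text: str) -> int:
    """Stand-in counter: one 'token' per whitespace-separated word."""
    return len(text.split())


history = ["Always answer in French.", "What is a token?", "Give me an example."]
print(trim_history(history, budget=8, count_tokens=word_count))
# ['What is a token?', 'Give me an example.']
```

Note that the oldest message, which carried the instruction, is the one that falls out. Systems that summarize or pin important instructions are trying to avoid exactly this.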

LLM context window: real use cases

Small Context Need

Prompt:

“Write five email subject lines.”

Needs little memory.

Medium Context Need

Prompt:

“Summarize this 10-page report.”

Needs moderate context.

Large Context Need

Prompt:

“Compare these three contracts and identify conflicting clauses.”

Needs large context.

Context window vs memory

These terms are often confused.

Context Window

Temporary information used in the current task.

Memory

Saved preferences or persistent information across sessions (when supported by systems).

A context window is not permanent memory.

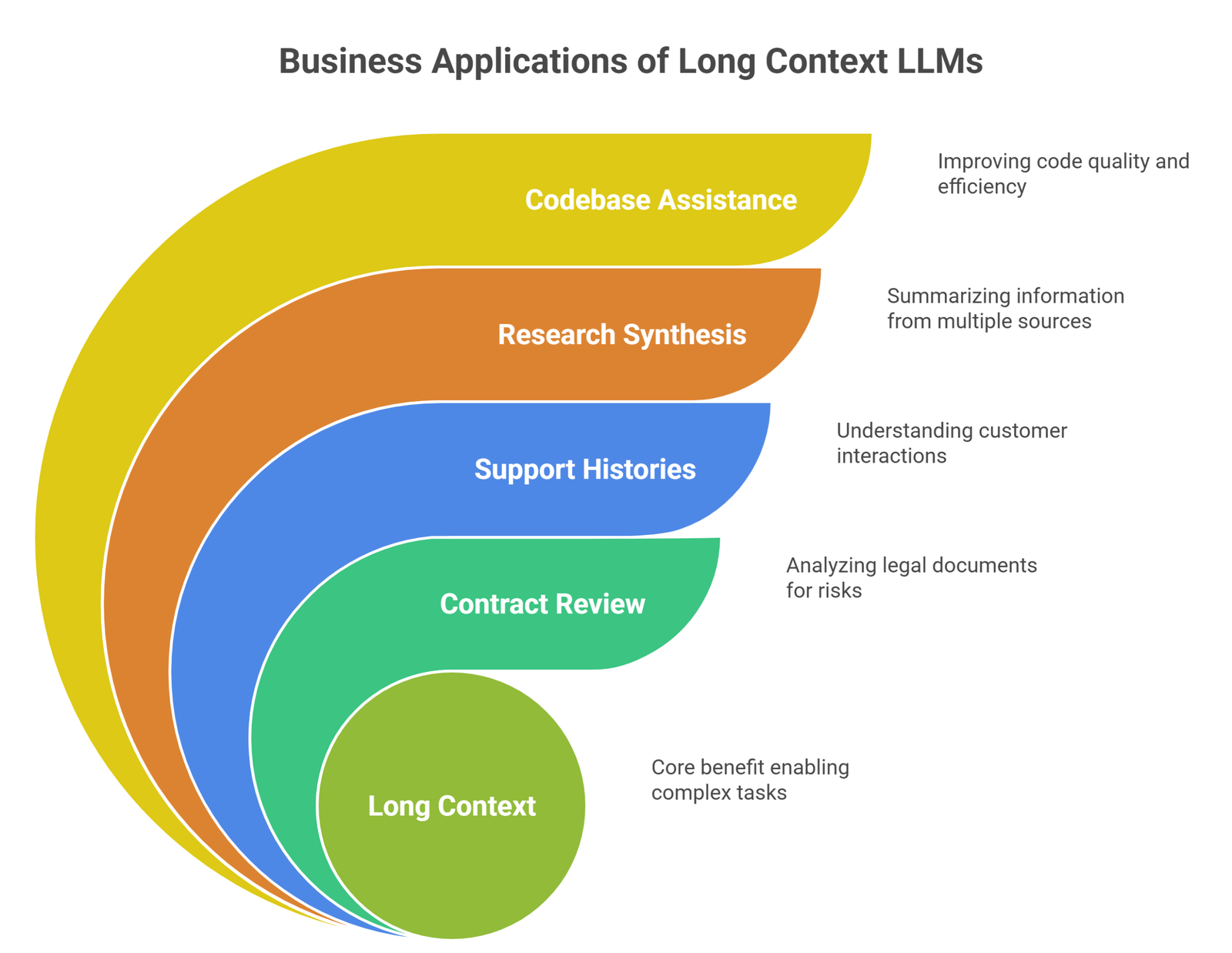

Why businesses care about long context

Companies use LLMs for:

- contract review

- support histories

- research synthesis

- internal knowledge search

- codebase assistance

- meeting transcript analysis

These tasks benefit from larger context windows.

Popular AI providers and long-context trends

Many AI providers are working on context improvements, and longer context is now a major area of competition.

How to work better within context limits

1. Use clear prompts

Avoid unnecessary text.

2. Summarize long history

Replace long chats with concise summaries.

3. Chunk large documents

Send sections one at a time.

4. Prioritize relevant info

Only include what matters.

5. Ask structured questions

Focused prompts use space better.
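Tip 3, chunking large documents, is the easiest of these to automate. Below is a minimal sketch that splits text into pieces of a fixed word count. The function name `chunk_words` and the word-based limit are assumptions for illustration; real pipelines often split on headings or paragraphs and measure size in tokens instead.

```python
def chunk_words(text: str, max_words: int) -> list[str]:
    """Split text into consecutive chunks of at most max_words words each."""
    if max_words <= 0:
        raise ValueError("max_words must be positive")
    words = text.split()
    return [
        " ".join(words[start:start + max_words])
        for start in range(0, len(words), max_words)
    ]


document = "one two three four five six seven"
for number, chunk in enumerate(chunk_words(document, max_words=3), start=1):
    print(number, chunk)
# 1 one two three
# 2 four five six
# 3 seven
```

Each chunk can then be sent on its own, ideally with a short summary of the earlier chunks so the model keeps the thread.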

Context window vs model intelligence

Bigger context does not always mean smarter reasoning.

A model may have:

- large context but average reasoning

- smaller context but stronger reasoning

Both capabilities matter.

Common beginner mistakes

- assuming AI remembers everything forever

- pasting irrelevant long text

- confusing words with tokens

- ignoring that responses also use tokens

- expecting perfect long-document recall

Suggested reading:

- LLM for Beginners

- How LLMs Work

- LLM Explained Simply

- LLM Training vs Inference

- Foundation Models vs LLMs

- Prompt Engineering Explained Simply

FAQ: LLM Context Window Explained

What is an LLM context window?

The maximum amount of text, measured in tokens, that the model can process at one time.

Is context window the same as memory?

No. It is temporary working context.

Why does AI forget earlier messages?

Older content may be removed when limits are reached.

Do bigger context windows help?

Yes, especially for long tasks and document analysis.

Should I always use maximum context?

Not necessarily. Relevant focused input often works best.

Final takeaway

LLM context windows determine how much information AI can consider during a task. They affect how much of a conversation the model can keep in view, how well it handles long documents, and overall conversation quality.

If you understand context windows, you can prompt smarter, structure inputs better, and get stronger results from AI tools.