Best Open Source LLMs in 2026: Top Models Compared for Real Use Cases

Open source LLMs have transformed the AI market. Instead of relying only on paid closed APIs, developers and businesses can now run powerful language models privately, customize them, and reduce long-term costs.

That is why searches for open source LLMs continue to grow.

But not all open models are equal. Some are better for coding, some for local deployment, some for enterprise privacy, and others for cost-efficient chat applications.

This guide compares the best open source LLMs in 2026 so you can choose the right model for your goals.

In simple terms

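In practice, "open source LLM" usually refers to a language model whose weights are openly available, sometimes together with the code and tooling needed to run or adapt it. License terms vary by model, so check them before commercial use.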

They are popular because they offer:

- lower long-term cost

- private deployment

- customization

- local usage

- vendor independence

- experimentation freedom

For many teams, they are a serious alternative to closed AI APIs.

How We Evaluated Top Open Source LLMs

We compared models using practical criteria:

- output quality

- reasoning ability

- coding performance

- deployment ease

- hardware efficiency

- community adoption

- documentation

- ecosystem momentum
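
One way to make criteria like these comparable is a weighted score. The sketch below is illustrative only: the weights and the 1-to-5 ratings are hypothetical placeholders chosen for the example, not the values behind this guide's rankings.

```python
# Hypothetical weights for the criteria listed above; they sum to 1.0.
CRITERIA_WEIGHTS = {
    "output_quality": 0.20,
    "reasoning_ability": 0.15,
    "coding_performance": 0.15,
    "deployment_ease": 0.15,
    "hardware_efficiency": 0.15,
    "community_adoption": 0.10,
    "documentation": 0.05,
    "ecosystem_momentum": 0.05,
}


def weighted_score(ratings: dict[str, float]) -> float:
    """Combine per-criterion ratings (for example 1-5) into one weighted score."""
    missing = set(CRITERIA_WEIGHTS) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    return sum(CRITERIA_WEIGHTS[name] * ratings[name] for name in CRITERIA_WEIGHTS)


# Placeholder ratings for an imaginary model, not measured data.
example_ratings = {name: 4.0 for name in CRITERIA_WEIGHTS}
example_ratings["deployment_ease"] = 2.0
print(round(weighted_score(example_ratings), 2))
```

Adjust the weights to your own priorities: a team deploying on laptops might weight hardware efficiency far more heavily than community adoption.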

Best Open Source LLMs (Quick List)

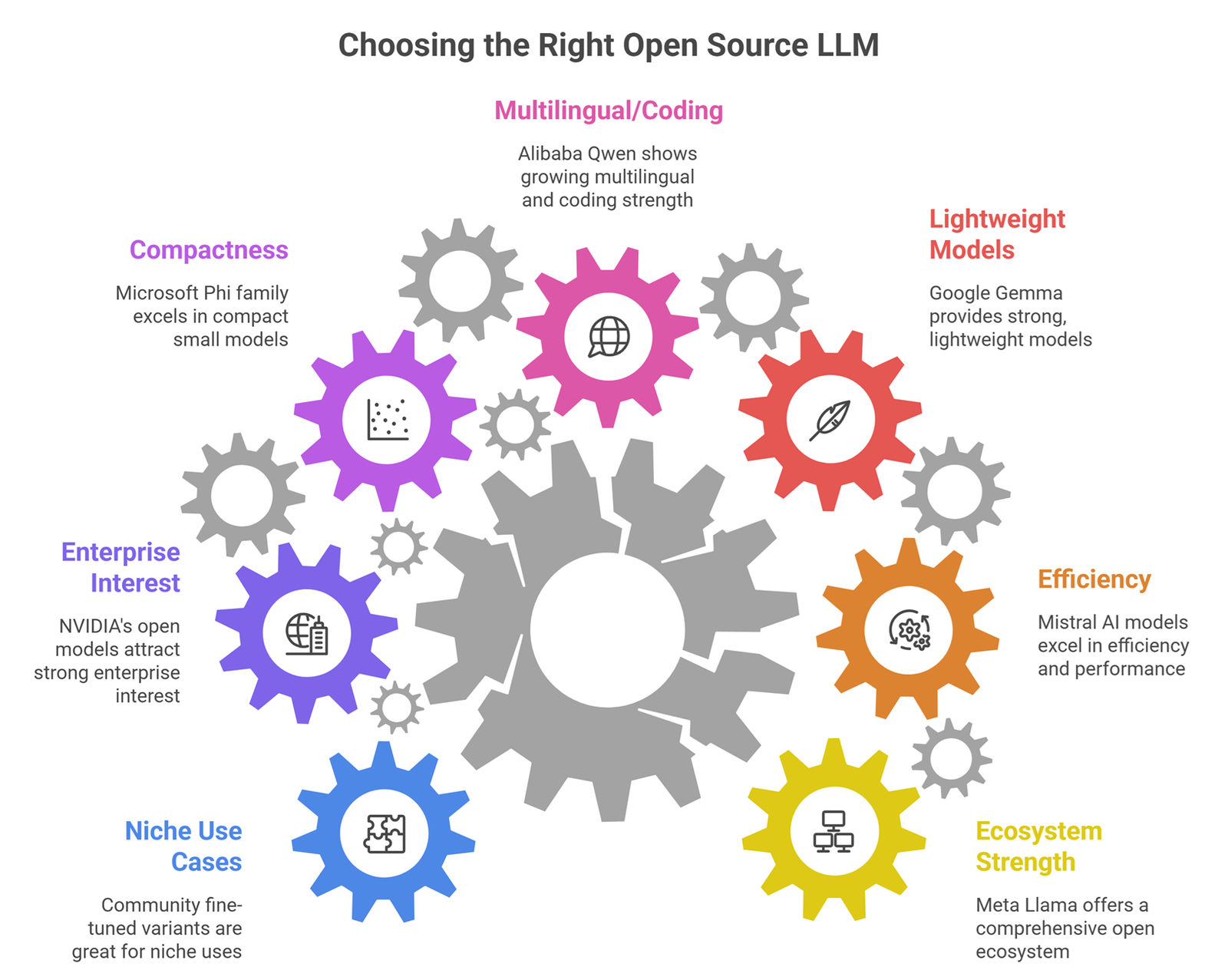

- Meta Llama ecosystem – Best all-around open ecosystem

- Mistral AI models – Excellent efficiency and strong performance

- Google Gemma ecosystem – Strong lightweight models

- Alibaba Group Qwen ecosystem – Growing multilingual and coding strength

- Microsoft Phi family – Compact small model excellence

- NVIDIA open model ecosystems – Strong enterprise interest

- Community fine-tuned variants – Great for niche use cases

Detailed comparison

| Model Ecosystem | Best For | Strengths | Considerations |
| --- | --- | --- | --- |
| Llama | Best overall | Huge community, versatile | Hardware needs vary |
| Mistral | Efficient deployment | Strong quality per size | Smaller ecosystem than Llama |
| Gemma | Lightweight workloads | Good local experimentation | Newer ecosystem depth |
| Qwen | Multilingual + coding | Rapid improvement | Regional tooling variance |
| Phi | Small devices | Excellent compact models | Smaller context/capability vs giants |
| NVIDIA ecosystems | Enterprise stacks | Infra synergy | Depends on deployment setup |

Best Open Source LLM by Use Case

Best Overall

Meta Llama models remain popular due to community adoption, tooling, and broad versatility.

Best for Efficiency

Mistral AI models are often praised for strong performance relative to size.

Best for Small Devices

Microsoft Phi models are attractive for lightweight deployments.

Best for Multilingual Needs

Alibaba Group Qwen models are widely discussed for multilingual capability.

Best for Google Ecosystem Builders

Google Gemma models suit experimentation, especially for teams already familiar with Google's ecosystem.

Why businesses choose open source LLMs

1. Privacy Control

Run models internally without sending sensitive data externally.

2. Lower Long-Term Costs

At scale, self-hosting can cost less than usage-based API pricing.
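
A rough break-even calculation shows where that crossover sits. This is a minimal sketch that assumes a fixed monthly hosting bill and a single blended API price. Both figures below are hypothetical, and the function names are made up for illustration.

```python
def monthly_api_cost(tokens_per_month: float, price_per_million_tokens: float) -> float:
    """Usage-based cost: pay per token processed."""
    return tokens_per_month / 1_000_000 * price_per_million_tokens


def break_even_tokens(fixed_monthly_hosting_cost: float, price_per_million_tokens: float) -> float:
    """Monthly token volume at which a fixed hosting bill equals API spend."""
    if price_per_million_tokens <= 0:
        raise ValueError("price_per_million_tokens must be positive")
    return fixed_monthly_hosting_cost / price_per_million_tokens * 1_000_000


# Hypothetical numbers for illustration only.
hosting = 2_000.0   # assumed fixed monthly cost of GPUs plus operations
api_price = 5.0     # assumed blended API price per million tokens
threshold = break_even_tokens(hosting, api_price)
print(f"Self-hosting breaks even at about {threshold:,.0f} tokens per month")
```

Below the threshold the API is cheaper. Above it, self-hosting wins only if one deployment can actually serve that volume, which this simple model does not check.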

3. Customization

Fine-tune models for domain workflows.

4. Vendor Independence

Avoid relying on one provider.

5. Product Differentiation

Build unique AI systems.

Why some teams still choose closed models

Open models are powerful, but hosted systems may offer:

- easier setup

- premium reasoning quality

- enterprise support

- managed uptime

- faster launch speed

Many companies use hybrid strategies.
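
A hybrid setup often comes down to a routing rule. The sketch below sends prompts that look sensitive to a self-hosted model and everything else to a hosted API. The patterns, the `route` function, and the two stand-in model callables are all hypothetical; a real policy would come from your own security and compliance requirements.

```python
import re
from typing import Callable

# Hypothetical patterns that mark a prompt as sensitive.
SENSITIVE_PATTERNS = [
    re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),        # US SSN-like number
    re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),      # email address
    re.compile(r"\bconfidential\b", re.IGNORECASE),
]


def is_sensitive(prompt: str) -> bool:
    """Return True if any sensitive pattern appears in the prompt."""
    return any(pattern.search(prompt) for pattern in SENSITIVE_PATTERNS)


def route(
    prompt: str,
    local_model: Callable[[str], str],
    hosted_model: Callable[[str], str],
) -> tuple[str, str]:
    """Send sensitive prompts to the self-hosted model, the rest to the hosted API."""
    if is_sensitive(prompt):
        return "local", local_model(prompt)
    return "hosted", hosted_model(prompt)


# Stand-in callables; replace with real model clients.
def fake_local(prompt: str) -> str:
    return f"[local] {len(prompt)} chars processed"


def fake_hosted(prompt: str) -> str:
    return f"[hosted] {len(prompt)} chars processed"


print(route("Summarize this confidential memo", fake_local, fake_hosted))
print(route("What is the capital of France?", fake_local, fake_hosted))
```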

The Best Local LLM for Coding 2026

For software engineers, running a coding assistant locally means proprietary source code never leaves the machine. The best local coding LLM for you depends on the programming languages you work in and on how much memory, and memory bandwidth, your machine has.

Code-specialized models are trained heavily on source code repositories. When setting up an offline environment, developers typically compare models on context window size and on how well they handle multi-file refactoring. Run locally, a lightweight model can serve as a low-latency autocomplete partner, which suits fast-paced, security-conscious software development.

Open Source LLMs for Coding

Popular choices often include:

- Llama ecosystem variants

- Mistral-family options

- Qwen coding-focused variants

- community code fine-tunes

Best results depend on prompt quality and tooling.

Open source LLMs for local laptops

Smaller models or quantized versions are usually best.

Look for:

- efficient memory usage

- fast inference

- 4-bit support

- active community tools
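
To see why quantization matters on a laptop, estimate the memory the weights alone need: parameter count times bits per weight, divided by eight. The helper below is a back-of-the-envelope sketch with a made-up function name. Real usage runs higher once you add the KV cache, activations, and quantization metadata.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights alone, in gigabytes (10^9 bytes)."""
    bytes_total = params_billions * 1e9 * bits_per_weight / 8
    return bytes_total / 1e9


# A 7-billion-parameter model at common precisions.
for bits in (16, 8, 4):
    print(f"7B parameters at {bits}-bit: ~{weight_memory_gb(7, bits):.1f} GB")
```

By this estimate a 7B model drops from about 14 GB at 16-bit to about 3.5 GB at 4-bit.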

Open Source LLMs vs Closed APIs

| Feature | Open Source LLMs | Closed APIs |
| --- | --- | --- |
| Control | High | Lower |
| Setup Effort | Higher | Lower |
| Customization | High | Moderate |
| Upfront Complexity | Higher | Lower |
| Ongoing Cost at Scale | Can be lower | Usage-based |
| Launch Speed | Slower | Faster |

Common Mistakes When Choosing Open Source LLMs

Picking by parameter size only

Bigger does not always mean better.

Ignoring hardware cost

Serving can be expensive.

No benchmarking

Always test real workloads.
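
A benchmark does not need to be elaborate to be useful. The harness below is a minimal sketch: it runs your own prompts through any `generate` callable and reports pass rate and mean latency. `fake_generate` and the two test cases are placeholders; swap in a real model client and prompts from your actual workload.

```python
import time
from statistics import mean
from typing import Callable


def run_benchmark(generate: Callable[[str], str], cases: list[tuple[str, str]]) -> dict:
    """Run each (prompt, expected_substring) case and record pass rate and latency."""
    latencies = []
    passed = 0
    for prompt, expected in cases:
        start = time.perf_counter()
        output = generate(prompt)
        latencies.append(time.perf_counter() - start)
        # Simple check: does the expected text appear in the output?
        if expected.lower() in output.lower():
            passed += 1
    return {
        "cases": len(cases),
        "pass_rate": passed / len(cases) if cases else 0.0,
        "mean_latency_s": mean(latencies) if latencies else 0.0,
    }


# Stand-in for a real model call.
def fake_generate(prompt: str) -> str:
    return "Paris" if "France" in prompt else "I am not sure."


cases = [
    ("What is the capital of France?", "Paris"),
    ("What is the capital of Japan?", "Tokyo"),
]
print(run_benchmark(fake_generate, cases))
```

Substring matching is a crude check. For open-ended tasks you would likely need task-specific scoring or human review.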

Weak security setup

Self-hosting needs controls.

Ignoring maintenance time

Open systems need operational effort.

How to choose the right open source LLM

Startup

Use efficient models with low hosting cost.

Enterprise

Prioritize privacy, governance, and support.

Solo Builder

Use lightweight local models.

Researcher

Choose flexible ecosystems.

Developer Team

Prioritize coding benchmarks and integrations.

Future of open source LLMs

Expect rapid growth in:

- stronger small models

- cheaper inference

- enterprise-ready stacks

- better multimodal open models

- local AI devices

- community fine-tuned ecosystems

Open source competition is accelerating innovation.

FAQ: Open Source LLMs

What are open source LLMs?

Language models whose weights are openly available, often along with the code and tooling needed to run or adapt them.

Are open source LLMs free?

Many are free to access, but hosting infrastructure can cost money.

Which open source LLM is best?

It depends on the use case. Llama, Mistral, Gemma, Qwen, and Phi are commonly discussed.

Can open source LLMs replace paid APIs?

For some use cases, yes, particularly where privacy, customization, or cost at scale matter more than setup speed.

Are they good for business?

Yes, especially for privacy and customization needs.

Final takeaway

Open source LLMs are no longer niche alternatives. They are serious options for startups, developers, and enterprises that need control, privacy, and cost efficiency.

Choose based on your actual workload, hardware budget, and deployment ability, not just popularity.