Context Engineering vs Prompt Engineering: What Changed?

Prompt engineering used to be the main way to control AI outputs. Modern AI systems now rely less on carefully crafted prompts and more on context engineering, a broader approach that includes memory, tools, retrieval, and structured inputs.

The shift is important because it changes how developers, researchers, and businesses build AI applications. Instead of asking “What prompt should I write?”, the better question now is “What context should I provide?”

In simple terms

- Prompt engineering = how you ask

- Context engineering = what the AI knows when answering

Prompting focuses on wording. Context engineering focuses on information.

What is prompt engineering?

Prompt engineering is the practice of designing inputs (prompts) to guide AI behavior.

Examples include:

- role prompting (“You are a teacher…”)

- few-shot prompting (giving examples)

- structured outputs (“Answer in JSON”)
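As a rough illustration, the three techniques above can be combined in a single prompt string. This is a minimal sketch, not a recommended template: the function name `build_prompt`, the teacher role, and the arithmetic examples are all hypothetical.

```python
import json


def build_prompt(question: str) -> str:
    """Combine role prompting, few-shot examples, and a JSON format instruction."""
    # Role prompting: tell the model who it should act as (hypothetical role).
    role = "You are a patient math teacher."
    # Structured output: ask for a fixed JSON shape.
    format_rule = 'Answer in JSON with a single key "answer".'
    # Few-shot prompting: made-up worked examples that show the desired shape.
    examples = [
        ("What is 2 + 3?", {"answer": 5}),
        ("What is 10 - 4?", {"answer": 6}),
    ]
    shots = "\n".join(f"Q: {q}\nA: {json.dumps(a)}" for q, a in examples)
    return f"{role}\n{format_rule}\n\n{shots}\nQ: {question}\nA:"


prompt = build_prompt("What is 7 * 6?")
print(prompt)
```

Everything the model sees here is fixed text plus one question, which is why this style suits small, self-contained tasks.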

It works well when:

- tasks are simple

- context is limited

- no external data is needed

This is why early LLM applications relied heavily on prompt techniques.

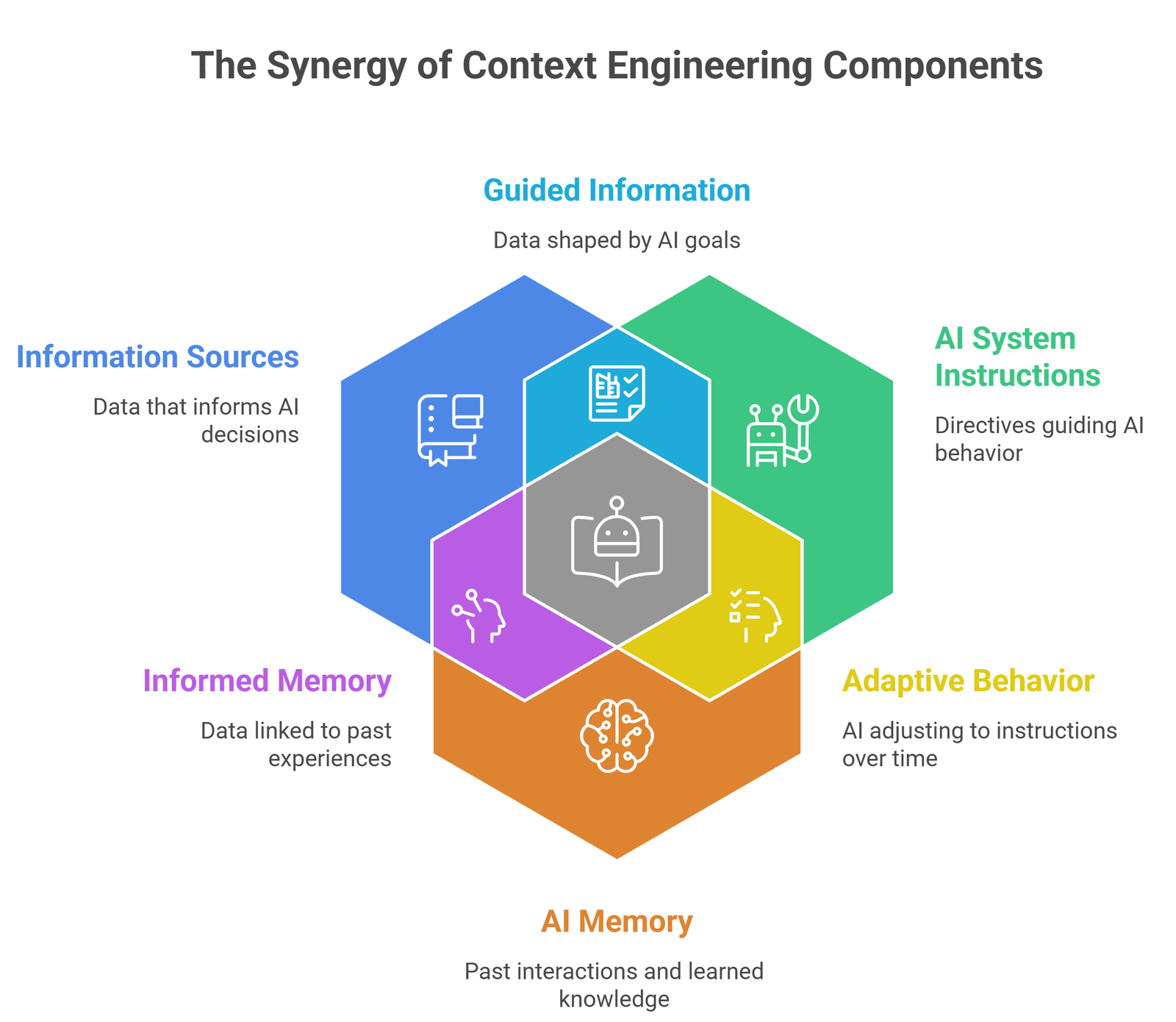

What is context engineering?

Context engineering is the process of designing the full input environment for an AI system.

This includes:

- retrieved documents (RAG)

- conversation history

- system instructions

- tool outputs

- structured data

- memory

Instead of relying only on a prompt, you build a context pipeline that feeds the model everything it needs.
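One way to picture this is a container that holds each component and renders it into the model's input. The sketch below is an assumption-heavy illustration: the `Context` class, its field names, and the role/content message shape (a common chat convention, not any specific vendor's API) are all hypothetical.

```python
import json
from dataclasses import dataclass, field


@dataclass
class Context:
    """Hypothetical container for the components listed above."""
    system_instructions: str
    retrieved_documents: list[str] = field(default_factory=list)
    conversation_history: list[dict] = field(default_factory=list)
    tool_outputs: list[str] = field(default_factory=list)
    structured_data: dict = field(default_factory=dict)
    memory: list[str] = field(default_factory=list)

    def to_messages(self, user_prompt: str) -> list[dict]:
        """Render every non-empty component into a chat-style message list."""
        sections = [self.system_instructions]
        if self.memory:
            sections.append("Memory:\n" + "\n".join(self.memory))
        if self.retrieved_documents:
            sections.append("Retrieved documents:\n" + "\n".join(self.retrieved_documents))
        if self.tool_outputs:
            sections.append("Tool outputs:\n" + "\n".join(self.tool_outputs))
        if self.structured_data:
            sections.append("Structured data:\n" + json.dumps(self.structured_data))
        system_message = {"role": "system", "content": "\n\n".join(sections)}
        user_message = {"role": "user", "content": user_prompt}
        # History sits between the system message and the new user prompt.
        return [system_message, *self.conversation_history, user_message]


# Made-up sample data for illustration only.
context = Context(
    system_instructions="Answer using only the provided context.",
    retrieved_documents=["Policy v2: refunds are issued within 14 days."],
    conversation_history=[
        {"role": "user", "content": "Hi"},
        {"role": "assistant", "content": "Hello! How can I help?"},
    ],
    structured_data={"customer_tier": "gold"},
)
messages = context.to_messages("How long do refunds take?")
print(len(messages))
```

The user's prompt is only the last message; most of what the model reads comes from the other fields.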

Why the shift happened

1. LLMs got better—but not perfect

Modern models are powerful, but they still:

- hallucinate

- lack real-time knowledge

- struggle with long-term memory

Prompting alone cannot fix these issues.

2. Real-world applications need data

In production systems, AI needs access to:

- company documents

- databases

- APIs

- user history

This requires retrieval and structured context, not just prompts.

3. RAG and agents changed everything

With the rise of RAG systems and AI agents:

- models retrieve data before answering

- tools execute tasks

- memory persists across sessions

This makes context more important than prompt wording.
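To make the tool and memory points concrete, here is a small sketch under simplifying assumptions. The `TOOLS` registry, the `run_tool` helper, and the file-backed `Memory` class are hypothetical names invented for this example; real agent frameworks handle both in more elaborate ways.

```python
import json
import tempfile
from pathlib import Path

# Hypothetical tool registry: tool names mapped to plain Python functions.
TOOLS = {
    "add": lambda a, b: a + b,
    "upper": lambda text: text.upper(),
}


def run_tool(name: str, **kwargs) -> str:
    """Execute a registered tool and describe the call as text for the context."""
    result = TOOLS[name](**kwargs)
    args = ", ".join(f"{key}={value!r}" for key, value in kwargs.items())
    return f"{name}({args}) -> {result}"


class Memory:
    """Persist notes to a JSON file so a later session can reload them."""

    def __init__(self, path: Path):
        self.path = path
        self.notes = json.loads(path.read_text()) if path.exists() else []

    def remember(self, note: str) -> None:
        self.notes.append(note)
        self.path.write_text(json.dumps(self.notes))


memory_file = Path(tempfile.mkdtemp()) / "memory.json"

session_one = Memory(memory_file)
session_one.remember(run_tool("add", a=2, b=3))

# A second object reading the same file simulates a new session.
session_two = Memory(memory_file)
print(session_two.notes)
```

The tool result and the stored note both end up as text that can be placed in the model's context on a later turn.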

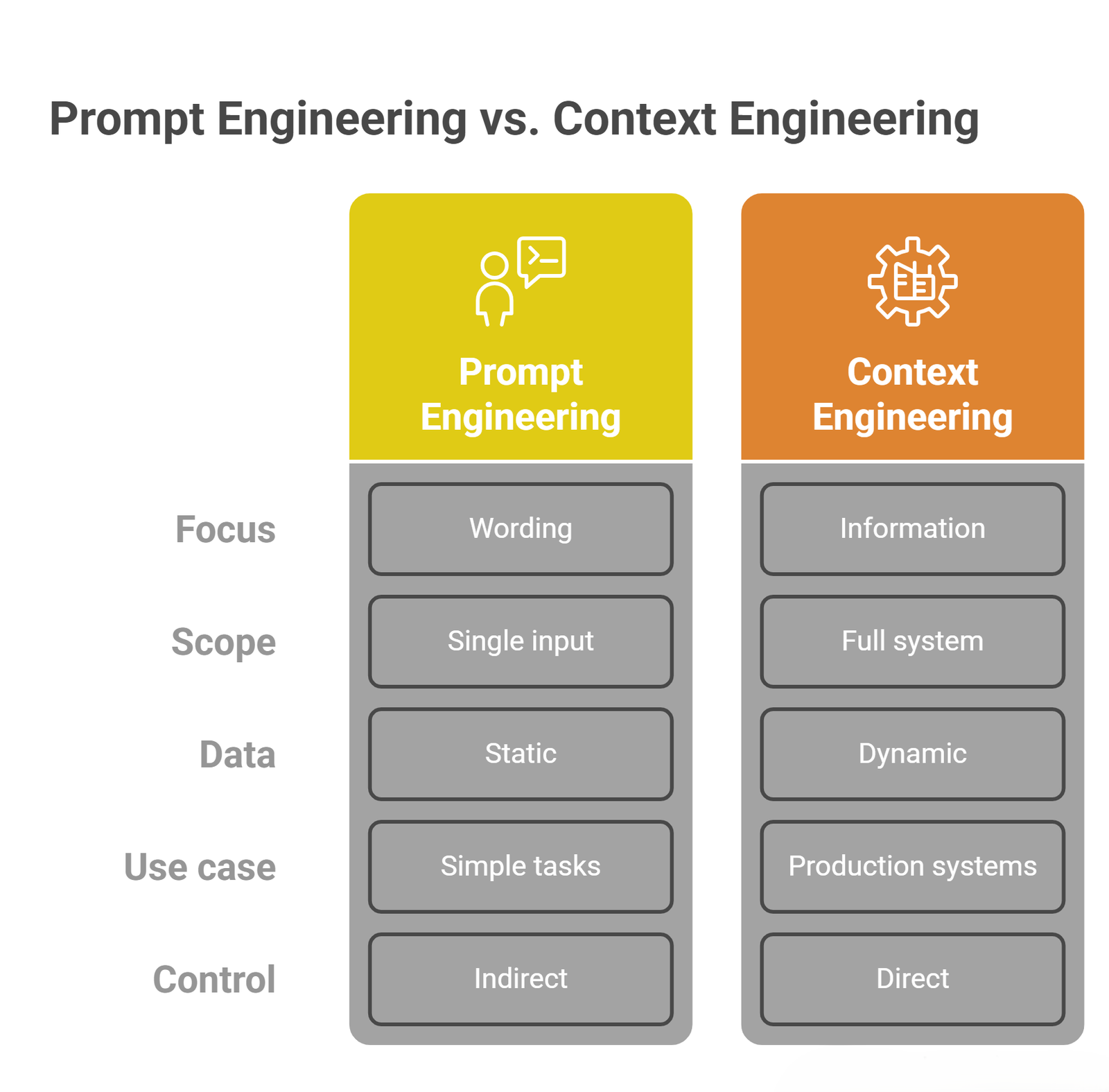

Key differences

| Aspect | Prompt Engineering | Context Engineering |
| --- | --- | --- |
| Focus | Wording | Information |
| Scope | Single input | Full system |
| Data | Static | Dynamic |
| Use case | Simple tasks | Production systems |
| Control | Indirect | Direct |

This shift explains why many modern AI systems feel more reliable: they are not just “better prompted,” they are better informed.

Example: Same task, different approach

Prompt engineering approach

“Summarize this company report.”

Problem:

- limited context

- generic output

Context engineering approach

System provides:

- report chunks (RAG)

- company metadata

- summary format

- previous summaries

Result:

- more accurate

- more relevant

- more consistent

Where prompt engineering still matters

Prompt engineering is not obsolete. It is still useful for:

- structuring outputs

- guiding tone and style

- controlling format

- quick tasks

But it is no longer enough on its own.

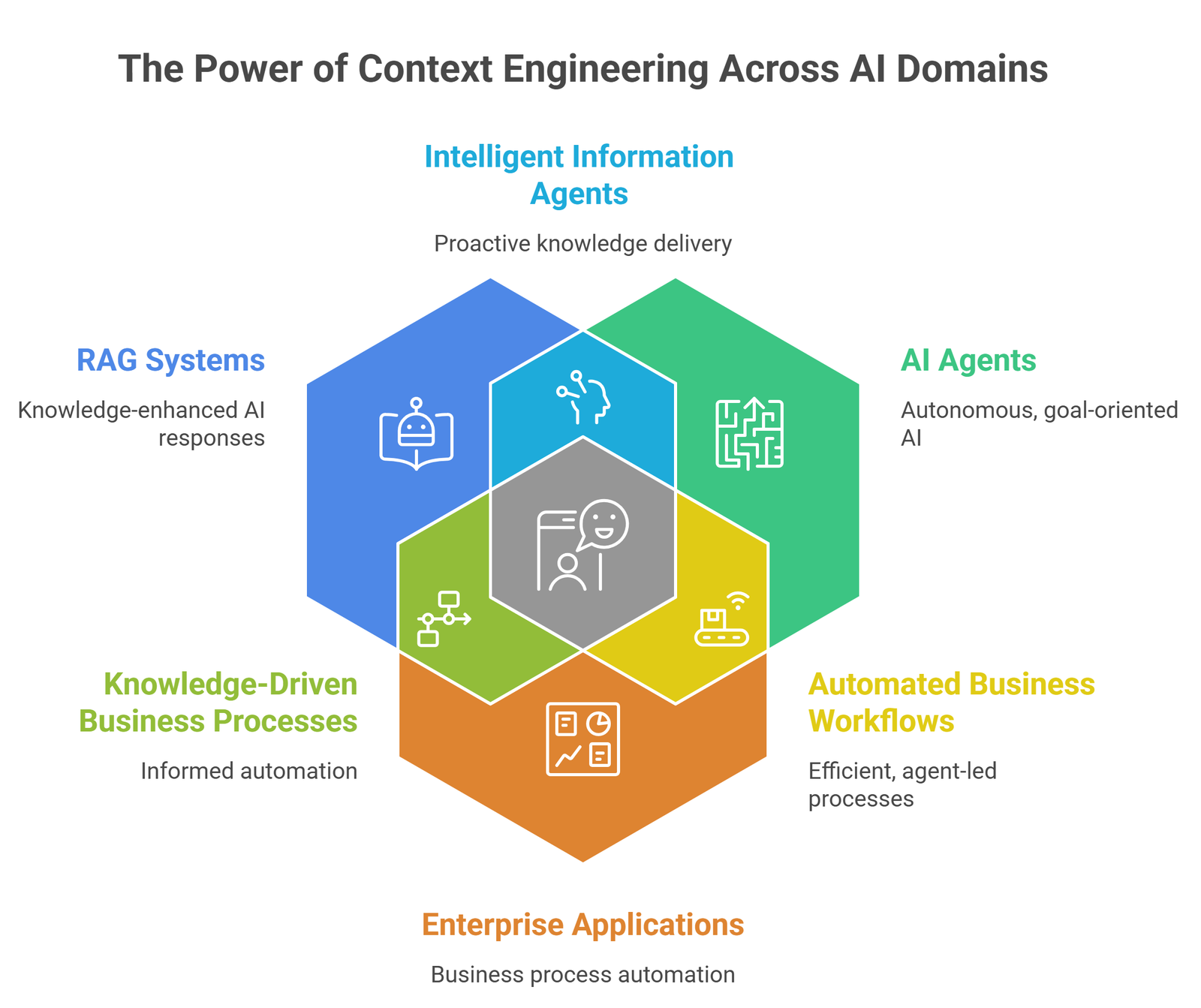

Where context engineering dominates

Context engineering is essential for:

- RAG systems

- AI agents

- enterprise applications

- personalized AI systems

- long-running workflows

In these systems, prompt quality matters less than context quality.

How modern AI systems combine both

The most effective systems use both:

- prompt = instruction layer

- context = knowledge layer

Think of prompts as the “question” and context as the “background knowledge.”

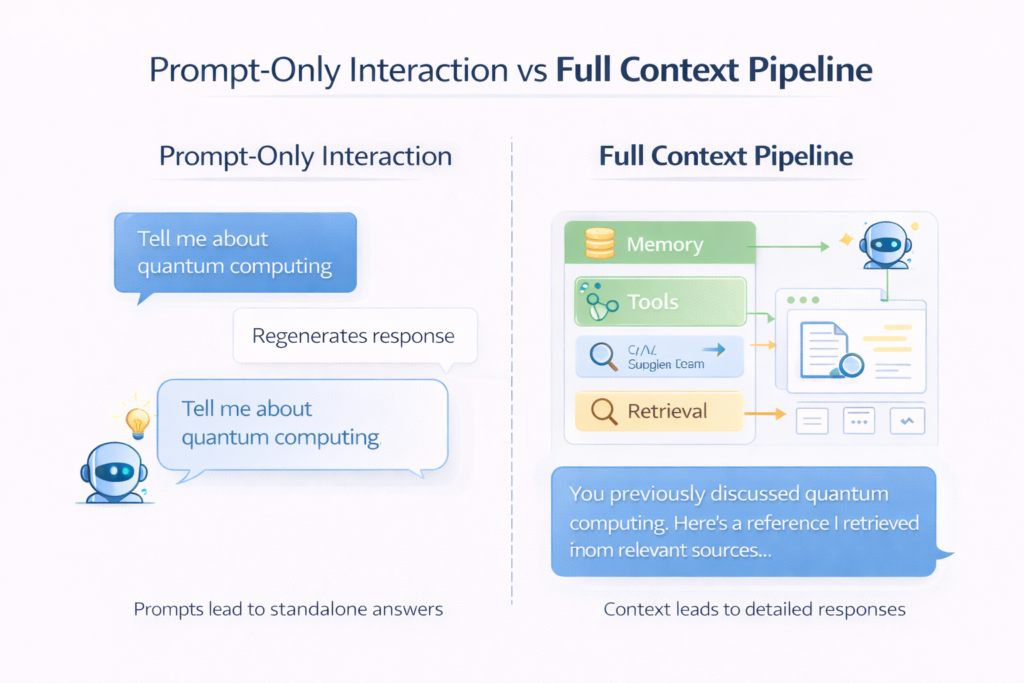

Practical workflow shift

Old approach:

Prompt → Model → Output

New approach:

Data → Retrieval → Context → Prompt → Model → Output

This pipeline explains why developers now focus more on architecture than prompt tricks.
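The new flow can be sketched end to end in a few functions. This is a toy illustration, not a production design: word-overlap scoring stands in for real retrieval, `stub_model` stands in for an actual model call, and every function name and sample document is made up.

```python
import re

# Made-up sample documents for illustration only.
DOCUMENTS = [
    "Refunds are processed within 14 days.",
    "The office is closed on public holidays.",
    "Refunds require the original receipt.",
]


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of words."""
    return set(re.findall(r"\w+", text.lower()))


def retrieve(query: str, documents: list[str], k: int = 2) -> list[str]:
    """Data -> Retrieval: keep the k documents sharing the most words with the query."""
    query_words = tokenize(query)
    scored = [(len(query_words & tokenize(doc)), doc) for doc in documents]
    ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
    return [doc for score, doc in ranked[:k] if score > 0]


def build_prompt(question: str, chunks: list[str]) -> str:
    """Context -> Prompt: place the retrieved chunks ahead of the instruction."""
    context = "\n".join(f"- {chunk}" for chunk in chunks)
    return f"Context:\n{context}\n\nQuestion: {question}\nAnswer using only the context."


def stub_model(prompt: str) -> str:
    """Placeholder for a real model call; it only reports the prompt size."""
    return f"(stub) received {len(prompt)} characters"


def run_pipeline(question: str, documents: list[str], model=stub_model) -> str:
    """Data -> Retrieval -> Context -> Prompt -> Model -> Output."""
    chunks = retrieve(question, documents)
    prompt = build_prompt(question, chunks)
    return model(prompt)


print(run_pipeline("How are refunds processed?", DOCUMENTS))
```

Most of the code deals with selecting and arranging information; the instruction itself is a single line inside `build_prompt`.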

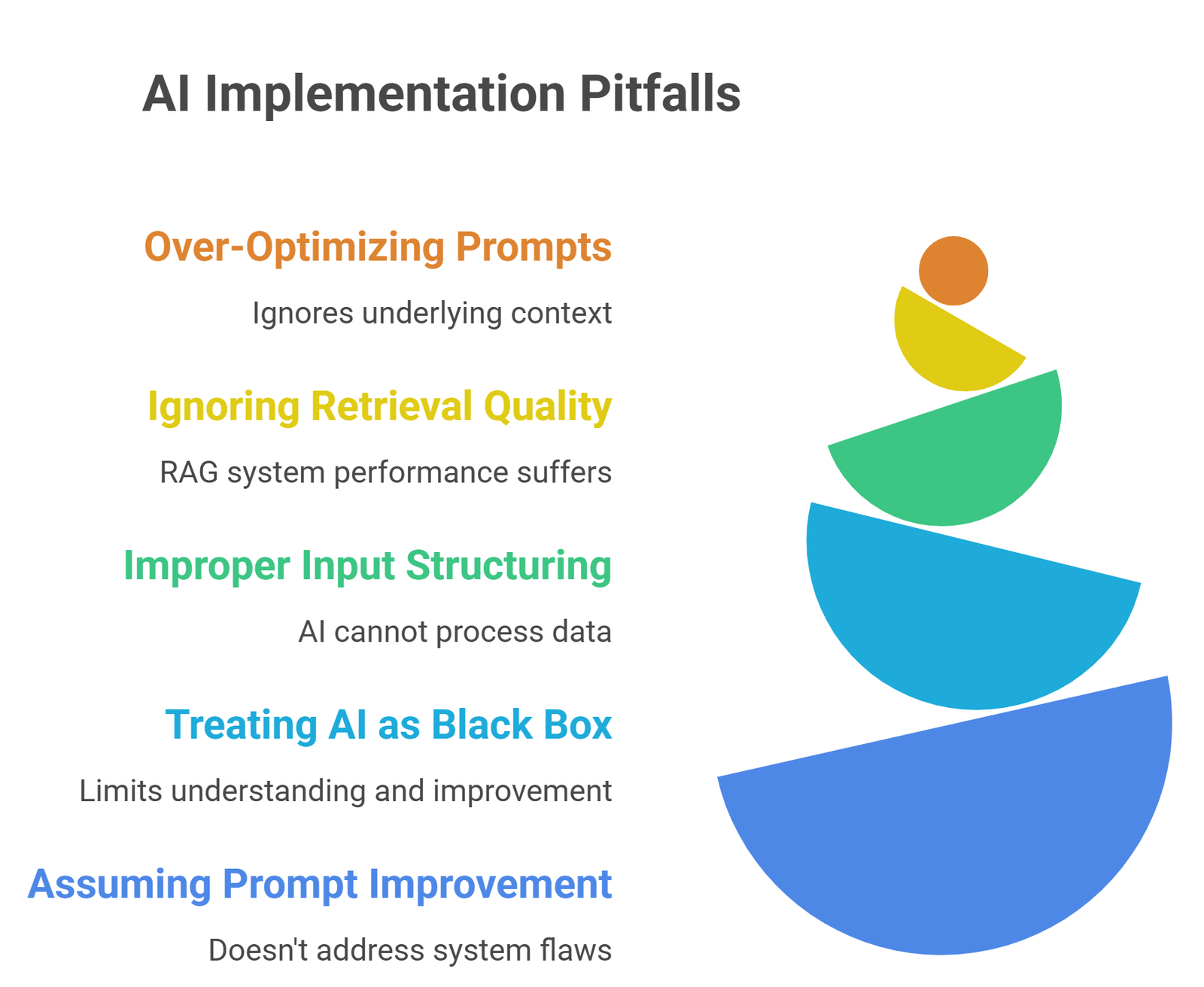

Common mistakes

- over-optimizing prompts without fixing context

- ignoring retrieval quality in RAG

- not structuring inputs properly

- treating AI like a black box

- assuming better prompts = better system

In many systems, performance issues turn out to be context problems rather than prompt problems.
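Retrieval quality in particular can be measured instead of guessed. One common metric is recall@k, sketched below; the document IDs and the set of relevant documents are invented, and a real evaluation would need a labeled set of queries.

```python
def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of the relevant document IDs that appear in the top-k results."""
    if not relevant:
        return 0.0
    hits = len(set(retrieved[:k]) & relevant)
    return hits / len(relevant)


# Hypothetical ranking returned by a retriever, best match first.
retrieved_ids = ["doc3", "doc7", "doc1", "doc9"]
# Hypothetical ground truth: the documents that actually answer the query.
relevant_ids = {"doc1", "doc2"}

print(recall_at_k(retrieved_ids, relevant_ids, k=3))
```

A low score here suggests the model never saw the right material, which no amount of prompt tuning would fix.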


FAQ: Context Engineering vs Prompt Engineering

Is prompt engineering becoming obsolete?

No, but it is becoming one part of a larger system.

What is more important: prompt or context?

Context is usually more important in real-world applications.

Do I still need prompt engineering?

Yes, especially for formatting, tone, and structure.

What is the future of AI development?

AI development is moving from prompt-centric to context-centric systems.

Final takeaway

Prompt engineering helped unlock the power of LLMs. Context engineering is what makes them useful in real applications.

If you want better AI outputs today, focus less on perfect prompts and more on building the right context pipeline.