LLM Latency Optimization: Speed Up AI Responses Fast

LLM Latency Optimization: 15 Ways to Speed Up AI Responses

Users love AI tools that feel instant. They dislike waiting several seconds for every answer.

LLM Serving Explained: How AI Models Reach Real Users

Large Language Models (LLMs) can answer questions, generate code, summarize documents, and power AI assistants. But

LLM Fine Tuning Basics: Beginner Guide to Customizing AI Models

Large Language Models (LLMs) can already write content, answer questions, summarize text, and generate code.

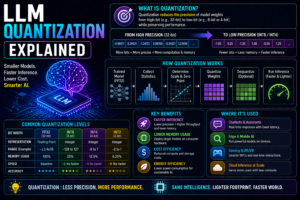

LLM Quantization Explained: What It Is and Why It Matters

Large Language Models (LLMs) are powerful, but they can also be expensive to run. Bigger

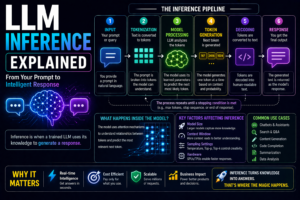

LLM Inference Explained: What It Means and How AI Generates Answers

Large Language Models (LLMs) can answer questions, write content, summarize documents, and generate code

LLM Token Limits Explained: What They Mean and Why They Matter

When using AI tools, you may hear terms like tokens, token limits, context size,