LLM Observability Explained: How to Monitor AI Systems in 2026

Building an AI application is only the first step. Once a Large Language Model (LLM) goes live, teams need to know what is happening in production.

Questions quickly appear:

- Why did quality drop today?

- Why are costs increasing?

- Which prompts fail most often?

- Why are users abandoning sessions?

- Are hallucinations rising?

That is where LLM observability becomes essential.

This guide explains LLM observability in simple language, why it matters, key metrics to track, and how teams use it to improve AI systems.

In simple terms

LLM observability means:

Monitoring, measuring, and understanding how an AI system behaves in real-world usage.

It helps teams track:

- prompts

- outputs

- latency

- token usage

- cost

- errors

- hallucinations

- user satisfaction

Think of it as analytics + monitoring for language models.

Why LLM Observability Matters

Traditional software monitoring tracks servers, uptime, and bugs.

AI systems need much more.

An LLM app may technically be online but still fail because:

- responses are low quality

- prompts are confusing

- costs spike

- users dislike answers

- retrieval systems break

- model updates reduce performance

Observability helps catch these issues early.

Easy analogy

Imagine running a restaurant.

Knowing the lights are on is not enough.

You also need to know:

- wait times

- food quality

- customer complaints

- ingredient cost

- repeat customers

That is observability for a restaurant.

Same idea for LLM products.

Core LLM Observability Metrics

1. Latency

How long responses take.

Includes:

- time to first token

- total completion time

- average response time

Critical for user experience.

2. Token Usage

Tracks prompt and output token volume.

Useful for cost control.

3. Cost Per Request

How expensive each interaction becomes.

Especially important for scaled products.

4. Error Rate

Measures:

- failed calls

- timeouts

- tool errors

- retrieval failures

5. Hallucination Signals

Detect suspicious or unsupported outputs.

6. User Satisfaction

Thumbs up/down, ratings, retention, repeat use.

7. Prompt Success Rate

Which prompts consistently deliver good outcomes.

AI ecosystems teams monitor

Many companies deploy systems using providers such as:

Regardless of provider, observability remains important.

What LLM observability tools often capture

Prompt Logs

What users asked.

Response Logs

What the model answered.

Metadata

Model version, latency, tokens.

Session Flows

Multi-step user journeys.

Alerts

Sudden failures or cost spikes.

Quality Signals

Human ratings or automated scoring.

LLM Observability vs Traditional Monitoring

| Feature | Traditional Monitoring | LLM Observability |

| Server Uptime | Yes | Yes |

| Error Logs | Yes | Yes |

| Prompt Tracking | No | Yes |

| Output Quality | Limited | Yes |

| Hallucination Risk | No | Yes |

| Token Cost | No | Yes |

AI systems need a broader lens.

LLM Observability: Real-world use cases

Customer Support Bot

Track:

- resolution rate

- escalation rate

- response quality

- latency

Internal Search Assistant

Track:

- retrieval success

- grounded answers

- citation quality

Writing Tool

Track:

- user satisfaction

- output usefulness

- regeneration frequency

Coding Assistant

Track:

- accepted suggestions

- bug rate

- completion speed

Common problems observability helps solve

Rising Costs

Long prompts or verbose outputs.

Slow Performance

Latency from model or tools.

Hallucination Spikes

After prompt or model changes.

Prompt Drift

Old prompts stop performing well.

Low Adoption

Users abandon confusing experiences.

How to Build LLM Observability

Step 1: Define Success Metrics

Speed, quality, cost, trust.

Step 2: Log Interactions Safely

Respect privacy and compliance.

Step 3: Add Dashboards

Make trends visible.

Step 4: Create Alerts

Notify on anomalies.

Step 5: Review Weekly

Continuous improvement matters.

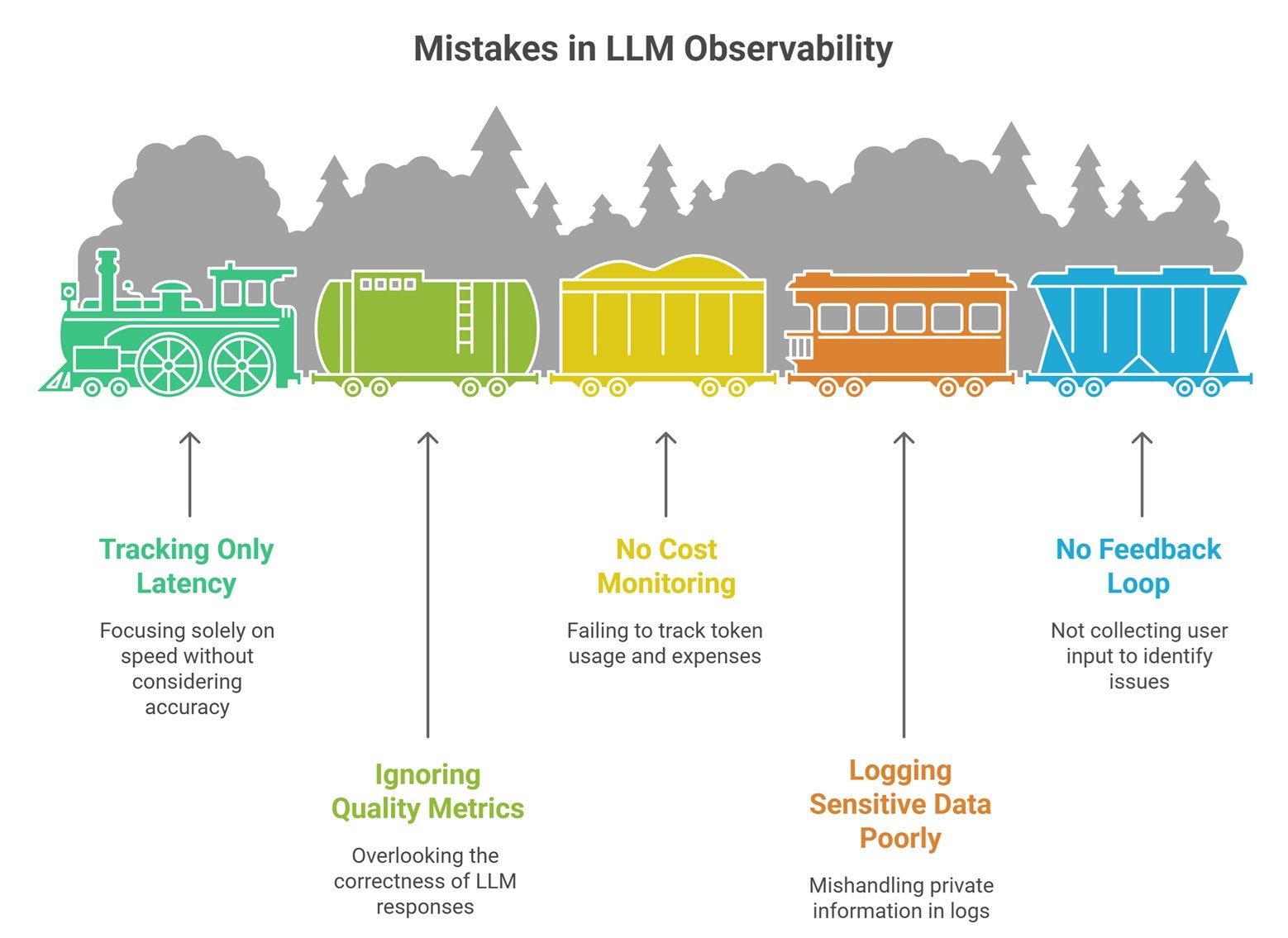

LLM Observability: Common Mistakes Teams Make

Tracking Only Latency

Speed is not enough.

Ignoring Quality Metrics

Fast wrong answers still fail.

No Cost Monitoring

Token waste becomes expensive.

Logging Sensitive Data Poorly

Creates privacy risk.

No Feedback Loop

Users reveal real issues.

Best metrics by maturity stage

| Stage | Focus Metrics |

| Prototype | Prompt success, latency |

| Early Launch | Cost, errors, satisfaction |

| Growth | Retention, hallucination, ROI |

| Enterprise | Compliance, reliability, auditability |

Future of LLM observability

Expect rapid growth in:

- automatic hallucination detection

- prompt optimization analytics

- agent workflow tracing

- model routing dashboards

- business ROI measurement

- privacy-safe analytics systems

Observability will become standard AI infrastructure.

Suggested Read:

- LLM Evaluation Metrics

- LLM Benchmarking Explained

- How to Reduce LLM Hallucinations

- LLM API Pricing Comparison

- LLM Safety Basics

- LLM for Beginners

FAQ: LLM Observability

What is LLM observability?

Monitoring and understanding how AI systems behave in production.

Why is it important?

Because AI apps can fail in ways normal software monitoring misses.

What should teams track first?

Latency, cost, errors, and user feedback.

Does observability reduce hallucinations?

It helps detect and reduce them over time.

Is observability only for enterprises?

No. Even small AI apps benefit.

Final takeaway

LLM observability helps teams move from guessing to knowing. It reveals how prompts, models, users, and costs interact in real production environments.

The best AI systems are not only powerful—they are measurable, monitored, and continuously improved.