LLM Hallucinations: Why They Happen and How to Reduce Them

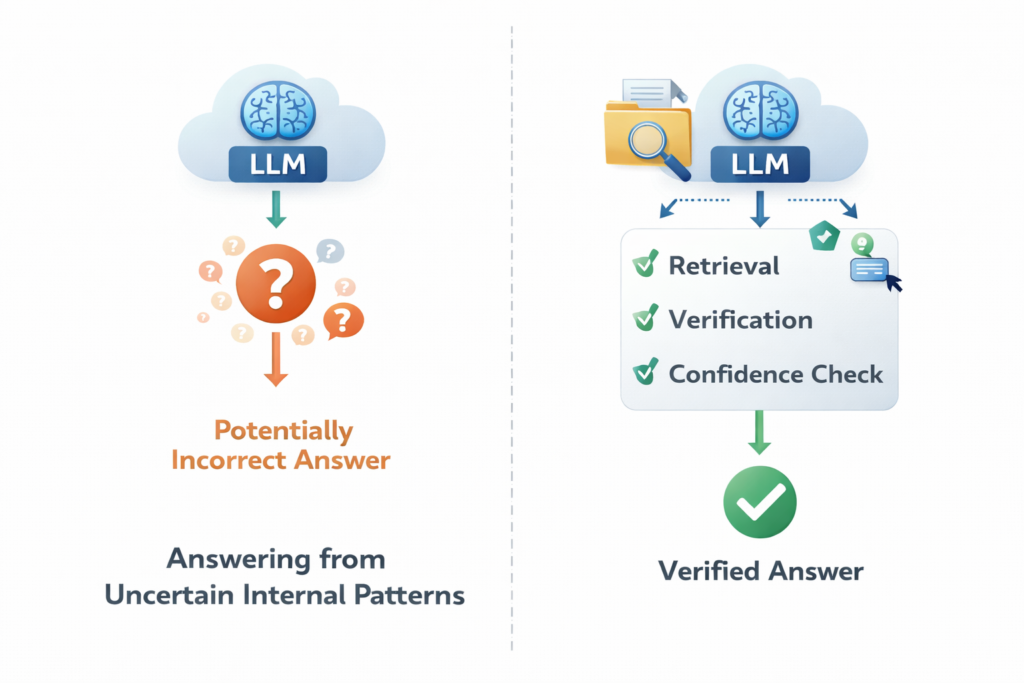

LLMs hallucinate because they are trained to predict plausible next tokens, not to guarantee truth. When the model is uncertain, missing key facts, or given weak context, it may still produce a fluent answer instead of saying it does not know. That is why hallucinations can […]
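To make the mechanism concrete, here is a minimal toy sketch of next-token selection. The tokens, logit values, and the `max_entropy` threshold are all invented for illustration, and entropy-based abstention is only one possible heuristic, not a method taken from the article. The sketch shows that greedy decoding returns a fluent-looking token even when the probability distribution is nearly flat, unless something explicitly checks for uncertainty.

```python
import math

def softmax(logits):
    """Convert raw scores into a probability distribution."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def entropy(probs):
    """Shannon entropy in nats; higher means the model is less certain."""
    return -sum(p * math.log(p) for p in probs if p > 0)

def greedy_pick(tokens, logits):
    """Always return the most probable token, however weak the evidence."""
    probs = softmax(logits)
    best = max(range(len(tokens)), key=lambda i: probs[i])
    return tokens[best], probs[best], entropy(probs)

def pick_or_abstain(tokens, logits, max_entropy=1.0):
    """Hypothetical guard: abstain when the distribution is too flat.

    The threshold 1.0 is an arbitrary value chosen for this toy example.
    """
    token, _, h = greedy_pick(tokens, logits)
    return token if h <= max_entropy else "[not sure]"

# Invented candidate tokens and logits, for illustration only.
tokens = ["Paris", "Lyon", "Rome", "Oslo"]
confident = [9.0, 2.0, 1.0, 0.5]   # one clear winner
uncertain = [1.1, 1.0, 1.0, 0.9]   # nearly uniform: the model is guessing

for name, logits in [("confident", confident), ("uncertain", uncertain)]:
    token, prob, h = greedy_pick(tokens, logits)
    guarded = pick_or_abstain(tokens, logits)
    print(f"{name}: greedy={token} (p={prob:.2f}, entropy={h:.2f}), guarded={guarded}")
```

In both cases greedy decoding outputs the same confident-sounding token. Only the guarded version distinguishes a well-supported answer from a near-random guess, which is the gap that mitigation techniques aim to close.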
