LLM Evaluation Metrics You Should Know

Evaluating large language models (LLMs) is harder than it looks. Unlike traditional software, you cannot measure performance with a single number. Instead, you need a combination of metrics that capture accuracy, fluency, reasoning, and real-world usefulness.

The most important LLM evaluation metrics include perplexity, BLEU, ROUGE, accuracy-based benchmarks, and human evaluation. Each metric measures a different aspect of performance, and no single metric is enough on its own.

In simple terms

LLM evaluation answers one question:

“How good is this model at doing what I need?”

But “good” depends on the task:

- writing → fluency and coherence

- coding → correctness

- QA → accuracy

- chat → usefulness

That’s why multiple metrics exist.

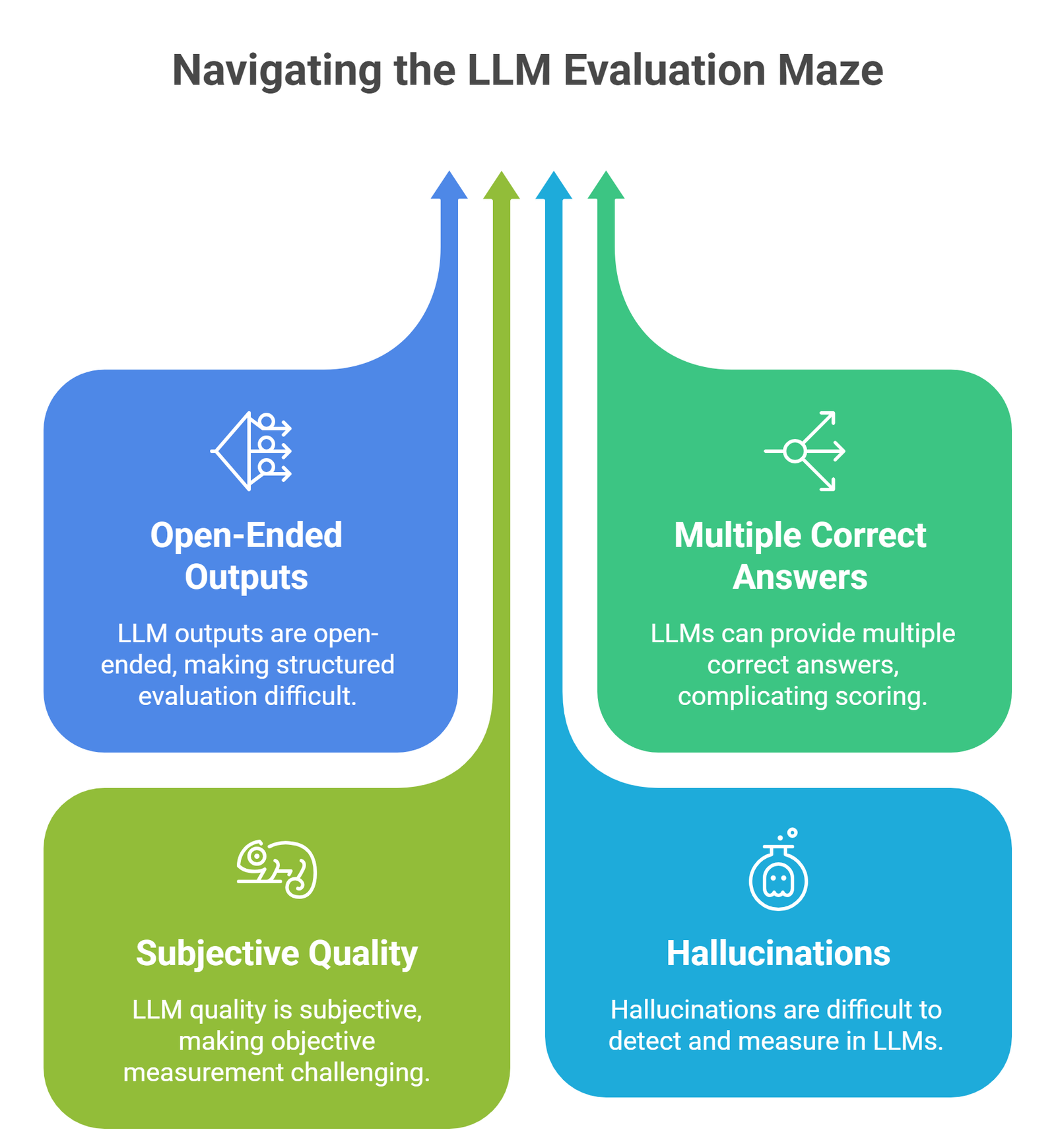

Why LLM evaluation is difficult

Traditional ML models are easier to evaluate because their outputs are structured: a class label or a number can be checked directly against ground truth.

LLMs are harder because:

- outputs are open-ended

- multiple answers can be correct

- quality is subjective

- hallucinations are hard to measure

This is why evaluation combines automatic metrics + human judgment + benchmarks.

Core LLM Evaluation Metrics

1. Perplexity

What it measures:

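How well a model predicts the next token in held-out text. Formally, it is the exponential of the average negative log-likelihood per token.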

Lower = better

Example:

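A model with lower perplexity on a test set assigns higher probability to that text, which usually, though not always, goes along with more natural-sounding output.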

When to use:

- language modeling

- comparing base models

Limitation:

Does not measure correctness or usefulness, and scores are only comparable between models that share a tokenizer and a test set.
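To make the definition concrete, here is a minimal sketch that computes perplexity from per-token log-probabilities. The probabilities below are invented for illustration; in practice they would come from the model being evaluated.

```python
import math

def perplexity(token_logprobs):
    """Perplexity = exp(average negative log-likelihood per token).

    `token_logprobs` holds natural-log probabilities the model assigned
    to each actual next token in a held-out text.
    """
    if not token_logprobs:
        raise ValueError("need at least one token log-probability")
    avg_nll = -sum(token_logprobs) / len(token_logprobs)
    return math.exp(avg_nll)

# Hypothetical probabilities two models assign to the same 4-token text.
model_a = [math.log(p) for p in (0.5, 0.25, 0.5, 0.25)]
model_b = [math.log(p) for p in (0.1, 0.2, 0.1, 0.2)]

ppl_a = perplexity(model_a)
ppl_b = perplexity(model_b)
print(f"model A: {ppl_a:.2f}, model B: {ppl_b:.2f}")  # lower is better
```

Model A is less "surprised" by the text, so its perplexity is lower. Nothing in this number says whether the text is true or helpful.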

2. BLEU (Bilingual Evaluation Understudy)

What it measures:

N-gram overlap between generated text and one or more reference texts, measured as precision with a penalty for overly short output.

Best for:

- translation

- structured generation tasks

Example:

Comparing generated translation with a ground-truth sentence.

Limitation:

Penalizes valid paraphrases, so it works poorly for creative or open-ended outputs.
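The sketch below is a simplified, single-reference, sentence-level version of BLEU: clipped n-gram precision up to bigrams, combined by geometric mean and multiplied by a brevity penalty. It is meant only to show the mechanics. The function name `simple_bleu` and the whitespace tokenization are assumptions made for this example, and real evaluations normally use an established implementation with up to 4-grams and smoothing.

```python
import math
from collections import Counter

def ngram_counts(tokens, n):
    """Count every contiguous n-gram in a token list."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))

def simple_bleu(candidate, reference, max_n=2):
    """Simplified BLEU against one reference (illustrative, no smoothing)."""
    cand, ref = candidate.split(), reference.split()
    if not cand:
        return 0.0
    log_precisions = []
    for n in range(1, max_n + 1):
        cand_counts = ngram_counts(cand, n)
        ref_counts = ngram_counts(ref, n)
        total = sum(cand_counts.values())
        # Clip each n-gram count by how often it appears in the reference.
        clipped = sum(min(count, ref_counts[gram])
                      for gram, count in cand_counts.items())
        if total == 0 or clipped == 0:
            return 0.0
        log_precisions.append(math.log(clipped / total))
    # Brevity penalty: candidates shorter than the reference are penalized.
    bp = 1.0 if len(cand) >= len(ref) else math.exp(1 - len(ref) / len(cand))
    return bp * math.exp(sum(log_precisions) / max_n)

identical = simple_bleu("the cat sat on the mat", "the cat sat on the mat")
paraphrase = simple_bleu("a fast brown fox", "a quick brown fox")
print(identical, paraphrase)  # the paraphrase scores about 0.5
```

Swapping "quick" for "fast" keeps the meaning but roughly halves the score, which is the rigidity described above.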

3. ROUGE (Recall-Oriented Understudy for Gisting Evaluation)

What it measures:

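N-gram overlap between a generated summary and a reference summary, with an emphasis on recall: how much of the reference the output covers.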

Best for:

- summarization tasks

Example:

Comparing generated summary with human-written summary.

Limitation:

Rewards surface word overlap, so it does not capture meaning well and can score a fluent but wrong summary highly.
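As a rough illustration, ROUGE-N recall can be sketched as the share of reference n-grams that also appear in the generated summary. The function name `rouge_n_recall` and the lowercase, whitespace-based tokenization are simplifications chosen for this example. Full ROUGE implementations also report precision, F-measure, and variants such as ROUGE-L.

```python
from collections import Counter

def rouge_n_recall(candidate, reference, n=1):
    """Fraction of reference n-grams that also occur in the candidate."""
    def counts(text):
        tokens = text.lower().split()
        return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))

    cand_counts, ref_counts = counts(candidate), counts(reference)
    total = sum(ref_counts.values())
    if total == 0:
        return 0.0
    overlap = sum(min(count, cand_counts[gram])
                  for gram, count in ref_counts.items())
    return overlap / total

reference = "the drug is effective"
summary = "the drug is not effective"  # opposite meaning

r1 = rouge_n_recall(summary, reference, n=1)
r2 = rouge_n_recall(summary, reference, n=2)
print(r1, r2)
```

Here the summary says the opposite of the reference, yet every reference word is covered, so ROUGE-1 recall is perfect. ROUGE-2 drops but remains fairly high.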

4. Accuracy / Exact Match

What it measures:

Whether the output matches the expected answer exactly, usually after light normalization such as lowercasing and stripping punctuation.

Best for:

- question answering

- classification

- coding tasks (scored by whether the code passes its tests, not by string match)

Example:

Did the model return the correct answer?

Limitation:

Too strict for open-ended tasks.
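A minimal sketch of exact-match accuracy follows. The normalization steps (lowercasing, removing ASCII punctuation, collapsing whitespace) are one common choice and an assumption of this example, not a fixed standard.

```python
import string

def normalize(text):
    """Lowercase, strip ASCII punctuation, and collapse whitespace."""
    text = text.lower()
    text = text.translate(str.maketrans("", "", string.punctuation))
    return " ".join(text.split())

def exact_match(prediction, reference):
    return normalize(prediction) == normalize(reference)

def accuracy(predictions, references):
    """Share of predictions that exactly match their reference."""
    if len(predictions) != len(references):
        raise ValueError("predictions and references must align")
    if not references:
        return 0.0
    hits = sum(exact_match(p, r) for p, r in zip(predictions, references))
    return hits / len(references)

# Hypothetical model outputs and gold answers.
predictions = ["Paris.", "4", "The capital is Paris"]
references = ["paris", "4", "Paris"]

score = accuracy(predictions, references)
print(f"{score:.2f}")
```

The third prediction is correct but phrased as a sentence, so it counts as a miss. That is the strictness problem in miniature.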

5. F1 Score

What it measures:

The harmonic mean of precision and recall.

Best for:

- QA tasks

- entity extraction

Example:

Giving partial credit when an answer overlaps with the reference but does not match it exactly.
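For QA, F1 is often computed over tokens shared between the predicted and reference answers. The sketch below assumes simple lowercase, whitespace-based tokenization; the function name `token_f1` is illustrative.

```python
from collections import Counter

def token_f1(prediction, reference):
    """Token-level F1: harmonic mean of precision and recall."""
    pred_tokens = prediction.lower().split()
    ref_tokens = reference.lower().split()
    # Multiset intersection counts each shared token at most as often
    # as it appears on both sides.
    overlap = sum((Counter(pred_tokens) & Counter(ref_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred_tokens)
    recall = overlap / len(ref_tokens)
    return 2 * precision * recall / (precision + recall)

partial = token_f1("the capital is paris", "paris")
print(f"{partial:.2f}")
```

The answer contains the reference token (recall 1.0) but adds three extra tokens (precision 0.25), so it earns partial credit instead of the zero that exact match would give.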

6. Human Evaluation

What it measures:

Real human judgment of output quality.

Criteria include:

- usefulness

- coherence

- correctness

- tone

Best for:

- chat systems

- content generation

Limitation:

Expensive and subjective.
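One simple way to handle the subjectivity is to collect ratings from several reviewers and look at both the average and the spread per criterion. The sketch below assumes a 1 to 5 rating scale and an arbitrary disagreement threshold; the ratings themselves are made up for illustration.

```python
import statistics

def summarize_ratings(ratings, disagreement_threshold=1.0):
    """Mean and spread per criterion, plus criteria where raters disagree.

    `ratings` maps a criterion name to the scores given by each rater.
    The threshold is an arbitrary choice for this example.
    """
    summary = {}
    for criterion, scores in ratings.items():
        summary[criterion] = {
            "mean": statistics.mean(scores),
            "spread": statistics.pstdev(scores),  # population std deviation
        }
    flagged = [name for name, stats in summary.items()
               if stats["spread"] > disagreement_threshold]
    return summary, flagged

# Hypothetical scores from three raters for one model response.
ratings = {
    "usefulness": [4, 5, 4],
    "coherence": [5, 5, 5],
    "correctness": [2, 5, 3],
    "tone": [4, 4, 5],
}

summary, flagged = summarize_ratings(ratings)
print(flagged)
```

Criteria with a large spread are good candidates for clearer rating guidelines or an extra reviewer.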

7. Hallucination Rate

What it measures:

How often the model states something false, or unsupported by its sources, as if it were fact.

Best for:

- factual QA

- enterprise applications

Example:

Tracking false statements in outputs.
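Once individual claims have been judged (by reviewers or an automated checker), the rate itself is simple arithmetic. The sketch below assumes each response has already been split into claims and each claim flagged as false or not. That labeling step is the hard part and is not shown, and the flags here are invented.

```python
def hallucination_rates(judgments):
    """Compute claim-level and response-level hallucination rates.

    `judgments` holds one list per response; each item is True when that
    claim was judged false or unsupported.
    """
    all_claims = [flag for response in judgments for flag in response]
    if not all_claims:
        return {"claim_rate": 0.0, "response_rate": 0.0}
    claim_rate = sum(all_claims) / len(all_claims)
    # A response counts as hallucinated if any of its claims is false.
    bad_responses = sum(1 for response in judgments if any(response))
    return {
        "claim_rate": claim_rate,
        "response_rate": bad_responses / len(judgments),
    }

# Hypothetical judgments for four responses (10 claims in total).
judgments = [
    [False, False, False],
    [False, True],
    [False, False],
    [True, False, False],
]

rates = hallucination_rates(judgments)
print(rates)
```

Reporting both numbers is useful: a low claim-level rate can still mean that a large share of responses contain at least one false statement.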

8. Toxicity and Safety Metrics

What it measures:

How often outputs contain harmful, offensive, or biased content.

Best for:

- public-facing systems

9. Latency and Cost

What it measures:

- response time

- inference cost

Best for:

- production systems
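Both quantities are easy to measure in a test harness. In the sketch below, `fake_generate` is a stand-in for a real model call, and the per-1,000-token prices are placeholder numbers, not any provider's actual rates.

```python
import math
import time

def measure_latencies(generate, prompts):
    """Wall-clock seconds for each call to `generate`."""
    latencies = []
    for prompt in prompts:
        start = time.perf_counter()
        generate(prompt)
        latencies.append(time.perf_counter() - start)
    return latencies

def percentile(values, pct):
    """Nearest-rank percentile, e.g. pct=95 for p95 latency."""
    if not values:
        raise ValueError("need at least one value")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]

def estimate_cost(input_tokens, output_tokens,
                  usd_per_1k_input, usd_per_1k_output):
    """Inference cost given token counts and per-1,000-token prices."""
    return (input_tokens / 1000 * usd_per_1k_input
            + output_tokens / 1000 * usd_per_1k_output)

def fake_generate(prompt):
    # Stand-in for a real model call; sleeps briefly to simulate work.
    time.sleep(0.001)
    return prompt.upper()

latencies = measure_latencies(fake_generate, ["a", "b", "c"])
p95 = percentile(latencies, 95)
cost = estimate_cost(2000, 500, usd_per_1k_input=0.5, usd_per_1k_output=1.5)
print(f"p95 latency: {p95:.4f}s, estimated cost: ${cost:.2f}")
```

Tail latency (p95 or p99) is usually more informative than the average, because a few slow responses dominate how users perceive the system.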

10. Benchmark Scores

Popular benchmarks include:

- MMLU (knowledge tasks)

- HumanEval (coding)

- HELM (holistic evaluation)

Best for:

- comparing models

Limitation:

Benchmarks ≠ real-world performance, and scores can be inflated when test questions leak into training data.
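Coding benchmarks such as HumanEval are commonly reported as pass@k: the probability that at least one of k sampled solutions passes the unit tests. A sketch of the usual unbiased estimator, given n samples per problem of which c pass, is shown below. The sample counts are made up for illustration.

```python
import math

def pass_at_k(n, c, k):
    """Estimate pass@k from n samples, c of which passed the tests.

    Computes 1 - C(n - c, k) / C(n, k): one minus the chance that a
    random size-k subset of the n samples contains no passing solution.
    """
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("require 0 <= c <= n and 1 <= k <= n")
    return 1.0 - math.comb(n - c, k) / math.comb(n, k)

# Hypothetical problem: 10 samples generated, 3 passed.
p1 = pass_at_k(n=10, c=3, k=1)
p5 = pass_at_k(n=10, c=3, k=5)
print(f"pass@1 = {p1:.3f}, pass@5 = {p5:.3f}")
```

The benchmark score is the average of this value over all problems. A high pass@5 can hide a much lower pass@1, so it matters which k a reported number uses.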

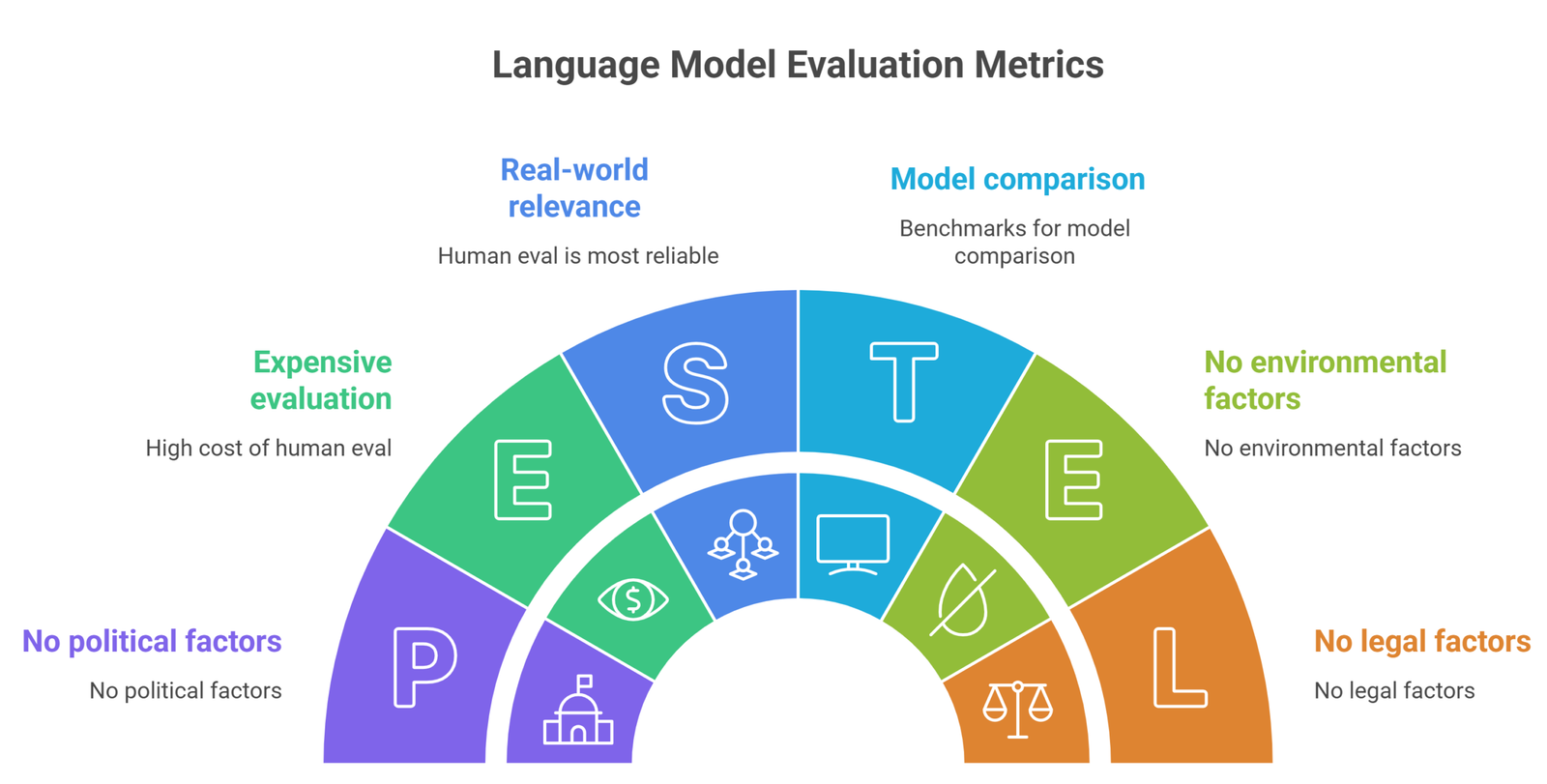

Comparison Table

| Metric | Best for | Strength | Weakness |
| --- | --- | --- | --- |
| Perplexity | Language modeling | Simple comparison | Not task-specific |
| BLEU | Translation | Easy scoring | Rigid |
| ROUGE | Summarization | Widely used | Shallow |
| Accuracy | QA | Clear results | Too strict |
| Human eval | Real use | Most reliable | Expensive |
| Benchmarks | Model comparison | Standardized | Limited realism |

When to use which metric

| Use case | Best metrics |
| --- | --- |
| Chatbots | Human eval + hallucination |
| Summarization | ROUGE + human eval |
| Translation | BLEU |
| Coding | Accuracy + benchmarks |
| RAG systems | Retrieval accuracy + hallucination |

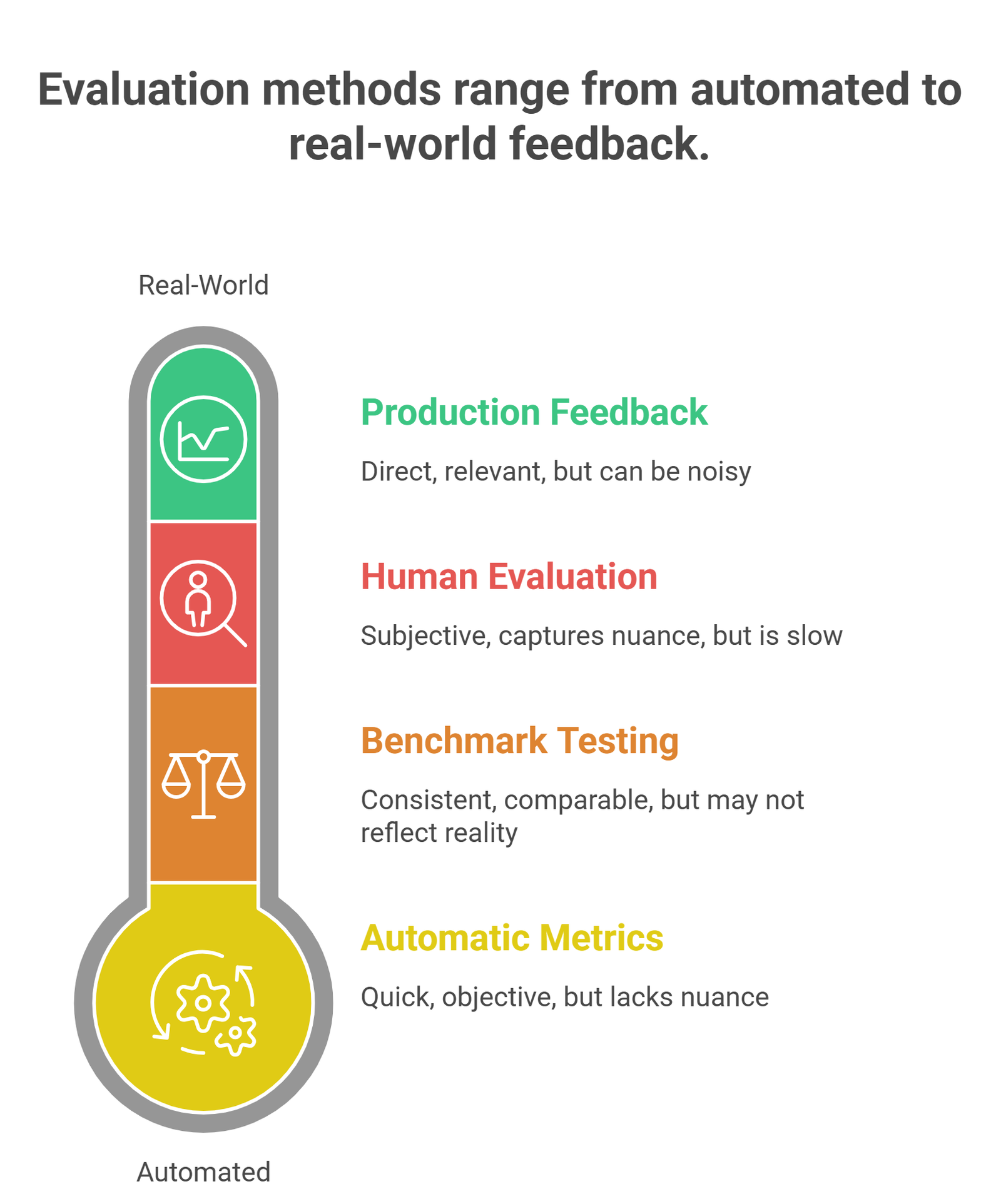

Real-world evaluation strategy

In practice, teams combine metrics:

- automatic metrics (fast)

- benchmark testing (standard)

- human evaluation (quality)

- production feedback (real-world)

This layered approach is common in production AI systems, because each layer catches problems the others miss.

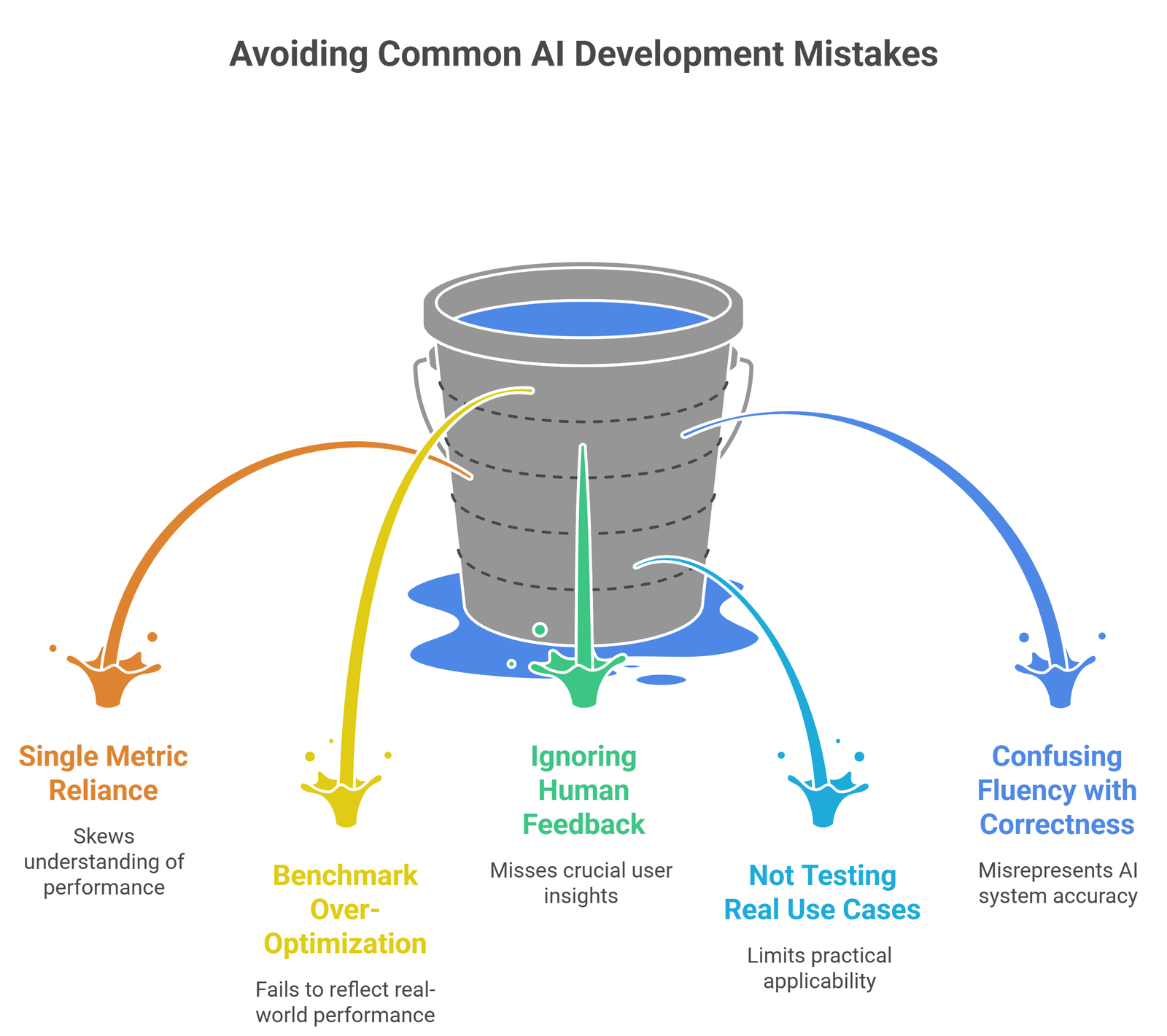

Common mistakes

- relying on a single metric

- over-optimizing benchmarks

- ignoring human feedback

- not testing real use cases

- confusing fluency with correctness

Many top-ranking blogs list metrics, but miss these practical pitfalls.

FAQ: LLM Evaluation Metrics

What is the most important LLM metric?

There is no single best metric. It depends on your use case.

Is perplexity enough?

No. It only measures language modeling, not usefulness.

Why is human evaluation important?

Because many aspects of quality cannot be measured automatically.

Are benchmarks reliable?

They are useful for comparison but not enough for real-world evaluation.

Final takeaway

LLM evaluation is not about finding one perfect score. It is about combining multiple signals to understand performance.

If you want reliable AI systems, focus less on benchmark numbers and more on how the model performs in real-world tasks.