MCP Explained: Why It Matters for AI Agents

MCP stands for Model Context Protocol. It is an open standard designed to connect AI applications to external systems such as tools, data sources, and workflows. In practice, MCP gives AI agents a more standardized way to discover capabilities, access context, and interact with external systems instead of relying on one-off custom integrations for every tool connection.

In simple terms

Think of MCP as a common connection layer for AI agents.

Without MCP, each agent-to-tool connection often needs its own custom plumbing. With MCP, developers expose capabilities through MCP servers, and compatible clients connect to them using a shared protocol. The official docs compare MCP to a “USB-C port for AI applications,” which is a useful mental model: one standard, many possible connections.

What is MCP?

The Model Context Protocol is an open-source standard for connecting AI applications to external systems. Official documentation says AI apps can use MCP to connect to data sources such as local files or databases, tools such as search engines and calculators, and workflows such as reusable prompts. Anthropic’s original introduction describes MCP as enabling secure, two-way connections between data sources and AI-powered tools.

That matters because modern AI agents are only useful when they can do more than generate text. They often need to search, retrieve context, call software, inspect documents, or follow workflows. MCP helps standardize how those connections happen.

Why MCP matters for AI agents

AI agents usually need three things beyond a language model:

- access to tools

- access to context or data

- a reliable way to interact with external systems

MCP matters because it gives agents a shared protocol for these tasks. The official MCP architecture docs describe core primitives including tools, resources, and prompts, while the SDK docs say developers can build MCP servers that expose these capabilities and MCP clients that connect to any MCP server. That reduces fragmentation and makes it easier to build agents that work across multiple systems.

In simpler terms, MCP helps move AI agents from isolated chat experiences toward connected systems that can actually work with software and information.

How MCP works

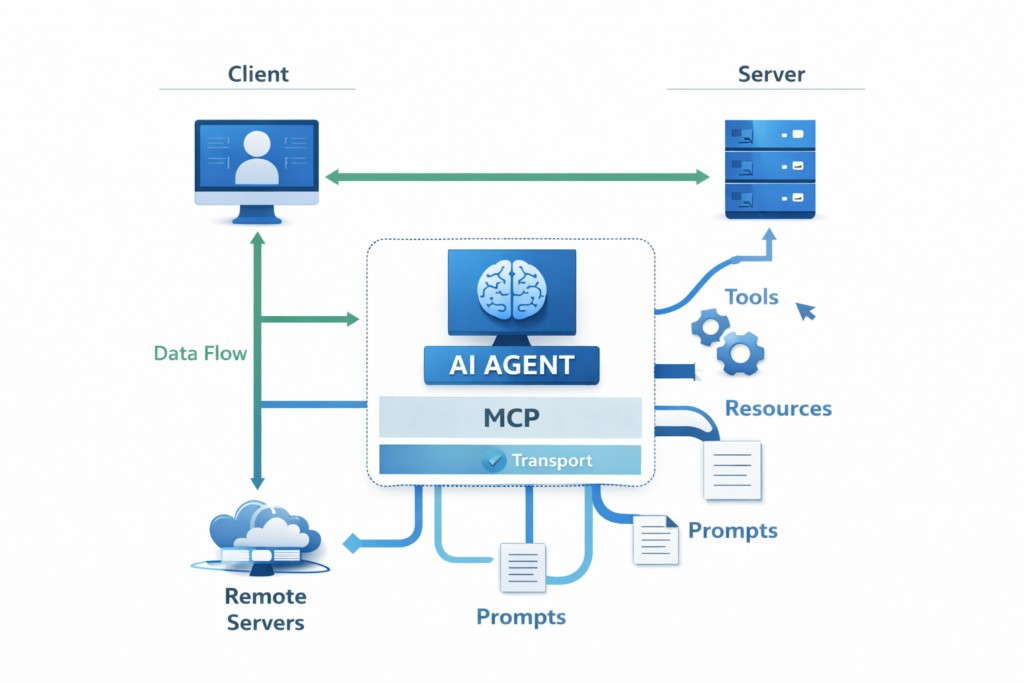

At a high level, MCP works through a client-server model.

- An MCP server exposes capabilities such as tools, resources, or prompts.

- An MCP client connects to that server and uses the exposed capabilities.

- The protocol defines how the client and server communicate.

Official docs describe MCP as having two layers: a data layer and a transport layer. The data layer covers JSON-RPC-based communication and the core primitives, while the transport layer covers how messages are actually exchanged and authorized.
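
To make the client-server exchange concrete, here is a minimal sketch of the data layer only, written with Python's standard library. It builds JSON-RPC 2.0 requests for the `tools/list` and `tools/call` methods and dispatches them in-process. The `add` tool, the `TOOLS` registry, and the helper function names are assumptions made for illustration. A real session also begins with an initialization handshake and runs over a transport, both of which this sketch skips.

```python
import json

# Hypothetical tool registry; the "add" tool is invented for this example.
TOOLS = {
    "add": {
        "description": "Add two integers.",
        "inputSchema": {
            "type": "object",
            "properties": {"a": {"type": "integer"}, "b": {"type": "integer"}},
            "required": ["a", "b"],
        },
        "handler": lambda args: args["a"] + args["b"],
    }
}


def make_request(request_id, method, params=None):
    """Client side: build a JSON-RPC 2.0 request as a JSON string."""
    message = {"jsonrpc": "2.0", "id": request_id, "method": method}
    if params is not None:
        message["params"] = params
    return json.dumps(message)


def error_response(request_id, code, message):
    """Build a JSON-RPC 2.0 error response."""
    error = {"code": code, "message": message}
    return json.dumps({"jsonrpc": "2.0", "id": request_id, "error": error})


def handle_request(raw):
    """Toy server side: dispatch one JSON-RPC 2.0 request string."""
    request = json.loads(raw)
    request_id = request.get("id")
    params = request.get("params", {})

    if request["method"] == "tools/list":
        # Discovery: describe each tool without exposing its handler.
        result = {"tools": [
            {"name": name,
             "description": tool["description"],
             "inputSchema": tool["inputSchema"]}
            for name, tool in TOOLS.items()
        ]}
    elif request["method"] == "tools/call":
        tool = TOOLS.get(params.get("name"))
        if tool is None:
            return error_response(request_id, -32602, "Unknown tool")
        value = tool["handler"](params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": str(value)}],
                  "isError": False}
    else:
        return error_response(request_id, -32601, "Method not found")

    return json.dumps({"jsonrpc": "2.0", "id": request_id, "result": result})


listing = json.loads(handle_request(make_request(1, "tools/list")))
call = json.loads(handle_request(
    make_request(2, "tools/call", {"name": "add", "arguments": {"a": 2, "b": 3}})
))
print(listing["result"]["tools"][0]["name"])   # add
print(call["result"]["content"][0]["text"])    # 5
```

The client first discovers what the server offers, then calls a tool by name with structured arguments. Swapping the in-process function call for a local or remote transport would not change the message shapes.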

Core MCP building blocks

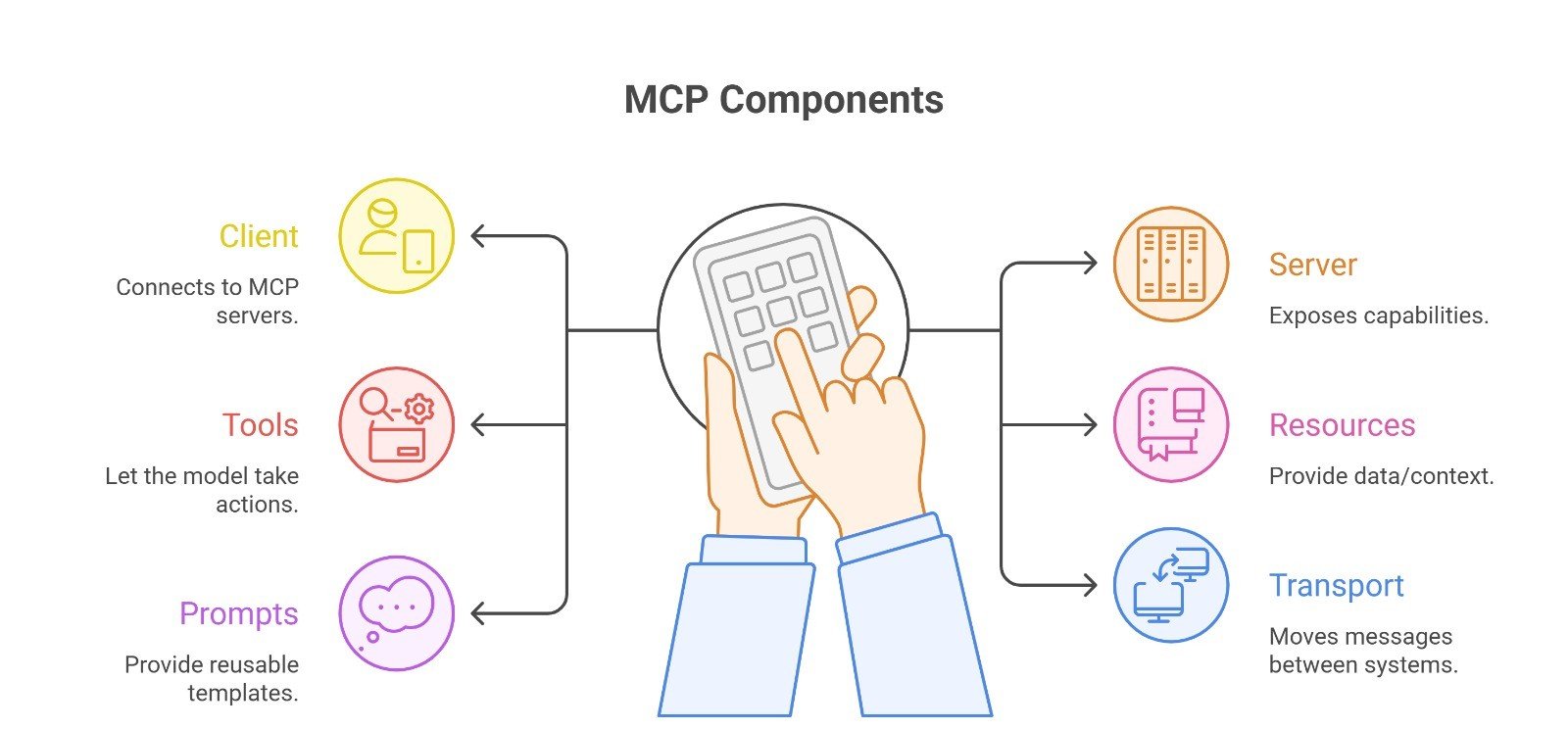

| MCP component | What it does | Simple example |
| --- | --- | --- |
| Client | Connects to MCP servers | An AI agent app |
| Server | Exposes capabilities | A database connector |
| Tools | Let the model take actions | Search, calculator, API call |
| Resources | Provide data/context | Files, schemas, records |
| Prompts | Provide reusable templates | Domain-specific workflows |
| Transport | Moves messages between systems | Local or remote connection |

These pieces come directly from the official MCP docs and spec pages. In short, tools let the model take actions, resources supply context, and prompts are reusable templates that servers expose to clients.
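
The difference between the three primitives is easier to see side by side. The sketch below is an in-memory stand-in, not an MCP SDK: `ToyServer`, `lookup_ticket`, the `docs://refund-policy` URI, and the `summarize_ticket` prompt are all hypothetical names chosen for illustration, and no protocol or transport is involved.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict


@dataclass
class ToyServer:
    """In-memory stand-in for an MCP server (no protocol, no transport)."""
    tools: Dict[str, Callable[..., str]] = field(default_factory=dict)
    resources: Dict[str, str] = field(default_factory=dict)  # URI -> content
    prompts: Dict[str, str] = field(default_factory=dict)    # name -> template

    def call_tool(self, name: str, **arguments) -> str:
        # Tools perform an action and return its result.
        return self.tools[name](**arguments)

    def read_resource(self, uri: str) -> str:
        # Resources return data for context and take no action.
        return self.resources[uri]

    def get_prompt(self, name: str, **arguments) -> str:
        # Prompts are reusable templates filled in with arguments.
        return self.prompts[name].format(**arguments)


def lookup_ticket(ticket_id: str) -> str:
    # Hypothetical tool; a real one would query a ticketing API.
    return f"Ticket {ticket_id}: status=open"


server = ToyServer(
    tools={"lookup_ticket": lookup_ticket},
    resources={"docs://refund-policy": "Refunds are available within 30 days."},
    prompts={"summarize_ticket": "Summarize this ticket for a customer:\n{ticket}"},
)

ticket = server.call_tool("lookup_ticket", ticket_id="T-100")
policy = server.read_resource("docs://refund-policy")
prompt = server.get_prompt("summarize_ticket", ticket=ticket)
print(prompt)
```

A client role would sit on the other side of these three methods, deciding when to call a tool, which resources to load as context, and which prompt template to use.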

MCP vs custom integrations

Without MCP, an AI product often needs a separate custom integration for every external system it wants to connect to. Anthropic’s engineering post on code execution with MCP says this creates fragmentation and duplicated effort. MCP’s value is that developers can implement support once and gain access to a broader ecosystem of integrations.

That does not mean MCP removes all engineering work. Teams still need security, permissions, evaluation, and good workflow design. But MCP can reduce repetitive integration work and make agent architectures cleaner.
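
A rough count makes the "implement once" argument more concrete. The sketch below assumes a simplified model that is not taken from the MCP docs: every app needs every system, a custom approach needs one integration per app-system pair, and a shared protocol needs one client implementation per app plus one server per system. Both function names are illustrative.

```python
def custom_integrations(apps: int, systems: int) -> int:
    """One bespoke integration for every (app, system) pair."""
    return apps * systems


def protocol_implementations(apps: int, systems: int) -> int:
    """One protocol client per app plus one protocol server per system."""
    return apps + systems


for apps, systems in [(1, 3), (3, 5), (5, 20)]:
    print(
        f"{apps} apps, {systems} systems: "
        f"custom={custom_integrations(apps, systems)}, "
        f"shared protocol={protocol_implementations(apps, systems)}"
    )
```

Under these assumptions, a single app talking to three systems sees no saving (3 custom integrations versus 4 protocol implementations), while five apps and twenty systems drop from 100 to 25. The benefit grows with the size of the ecosystem, which is why a shared standard matters more as agents connect to more systems.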

Real-world examples of MCP for AI agents

A research agent could use MCP to connect to a document source as a resource and to a search function as a tool. A support agent could connect to internal docs, a ticketing API, and reusable workflow prompts. A coding agent could access repositories, documentation, and code-execution tools through standardized interfaces. These examples are consistent with the official framing of MCP as a way to connect AI apps to data sources, tools, and workflows.

Anthropic also documents an MCP connector for Claude’s Messages API, which shows how vendors are using MCP in agent and tool workflows. Meanwhile, the MCP roadmap and blog show the protocol continuing to evolve around transport, governance, enterprise readiness, and agent communication.

Why MCP is getting more attention now

MCP is getting more attention because agent systems are becoming more connected and more operational. Once an agent needs to access external tools, data, and workflows, standardized integration becomes much more valuable. Official MCP roadmap materials published in March 2026 mention priorities such as transport scalability, governance maturation, and enterprise readiness, which suggests the ecosystem is moving beyond simple demos toward broader production use. That last point is an inference from the roadmap direction, not a guaranteed adoption outcome.

Limitations and risks

MCP is not magic. It helps standardize connections, but it does not automatically make an agent safe, reliable, or well-designed. The current specification explicitly includes security and trust & safety considerations, noting that MCP can enable arbitrary data access and code execution paths. That means permissions, approvals, tool boundaries, and evaluation still matter.
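
One way to picture "permissions, approvals, and tool boundaries" is a small gate that every tool call must pass before it runs. This is a sketch of an application-level pattern, not something defined by the MCP specification. `guarded_call`, `ToolCallDenied`, and the three example tools are hypothetical, and the `approve` callback stands in for whatever human or policy check a real system would use.

```python
from typing import Callable, Dict, Set


class ToolCallDenied(Exception):
    """Raised when a tool call is blocked by policy."""


def guarded_call(
    tools: Dict[str, Callable[..., str]],
    name: str,
    arguments: dict,
    allowed: Set[str],
    needs_approval: Set[str],
    approve: Callable[[str, dict], bool],
) -> str:
    # Boundary: tools outside the allowlist never run.
    if name not in allowed:
        raise ToolCallDenied(f"{name} is not on the allowlist")
    # Approval: sensitive tools run only after an explicit yes.
    if name in needs_approval and not approve(name, arguments):
        raise ToolCallDenied(f"{name} was not approved")
    return tools[name](**arguments)


# Hypothetical tools that only return strings; nothing is touched on disk.
tools = {
    "read_file": lambda path: f"(contents of {path})",
    "delete_file": lambda path: f"deleted {path}",
    "run_shell": lambda command: f"ran {command}",
}
allowed = {"read_file", "delete_file"}
needs_approval = {"delete_file"}


def deny_all(name: str, arguments: dict) -> bool:
    # Stand-in for a human approval prompt that always says no.
    return False


print(guarded_call(tools, "read_file", {"path": "notes.txt"},
                   allowed, needs_approval, deny_all))
```

With this policy, `read_file` runs freely, `delete_file` is blocked until someone approves it, and `run_shell` is rejected outright because it is not on the allowlist.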

A second limitation is ecosystem maturity. MCP is evolving quickly, with roadmap work around transports, SDK conformance, and newer extensions like MCP Apps. That makes MCP promising, but also means implementers should expect the ecosystem to keep changing.

FAQ: MCP Explained

What does MCP stand for?

MCP stands for Model Context Protocol.

What is MCP in simple words?

It is an open standard for connecting AI applications to external systems such as tools, data sources, and workflows.

Why does MCP matter for AI agents?

Because agents need a consistent way to access tools and context. MCP helps standardize those connections.

Is MCP only for Anthropic?

No. Anthropic introduced MCP, but the protocol now has its own documentation and governance direction, and Anthropic said in December 2025 that it was donating MCP to the Linux Foundation’s Agentic AI Foundation.

Does MCP make agents safe by default?

No. The spec itself highlights security and trust considerations. MCP standardizes connection patterns, but safety still depends on system design.

Final takeaway

MCP matters because AI agents are only as useful as the systems they can connect to. The Model Context Protocol gives developers a shared way to expose tools, resources, and prompts so agents can work with external systems more consistently. The simplest way to think about it: MCP does for connected AI workflows what a standard port does for hardware ecosystems. It does not solve every problem, but it makes building agent integrations much more practical.