Open Source LLMs vs Closed Models

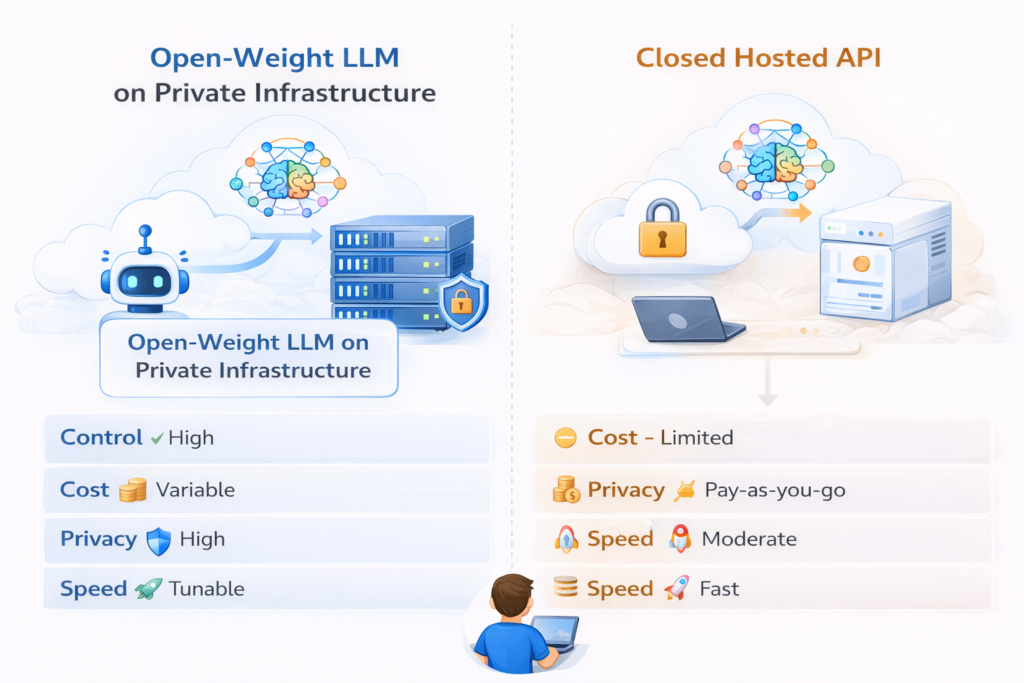

Open source LLMs and closed models solve different problems. In general, open-weight models give you more control, customization, and deployment flexibility, while closed models usually offer easier access, managed infrastructure, and a faster path to production through hosted APIs. In 2026, that trade-off matters more than ever because both camps are now strong enough for real applications.

In simple terms

If you want to run a model on your own infrastructure, fine-tune it deeply, or keep tighter control over data residency, open-weight models are often the better fit. If you want the latest hosted capabilities, simpler scaling, and less operational overhead, closed models are often the easier choice.

First, an important clarification

In AI, “open source” is often used loosely. Many popular models described as open are more accurately called open-weight models, meaning their weights are available to download and run, but licensing, usage terms, and training-data transparency may still differ from traditional open-source software. OpenAI describes gpt-oss as open-weight and available under Apache 2.0, Google describes Gemma as open models with open weights, and Meta positions Llama as openly available with downloadable weights.

That distinction matters because “open source” can imply more openness than a model actually provides. For most buyers and builders, the practical question is simpler: Can I run and customize this model myself, or do I mostly access it through someone else’s platform?

What counts as a closed model?

A closed model is usually accessed through a hosted API or managed product rather than by downloading model weights. OpenAI’s latest hosted models are available through the OpenAI API, and Anthropic documents Claude as available through its API and partner platforms. That model-delivery approach gives users access to managed infrastructure, updates, and platform tools without self-hosting the underlying weights.

What counts as an open model?

An open or open-weight model usually lets you download weights, run inference on infrastructure you control, and often fine-tune or adapt the model for your own use case. Examples include Meta’s Llama family, Google’s Gemma family, Mistral’s open-weight releases, and OpenAI’s gpt-oss models. These vendors explicitly position such models around customization, self-hosting, or on-prem deployment.

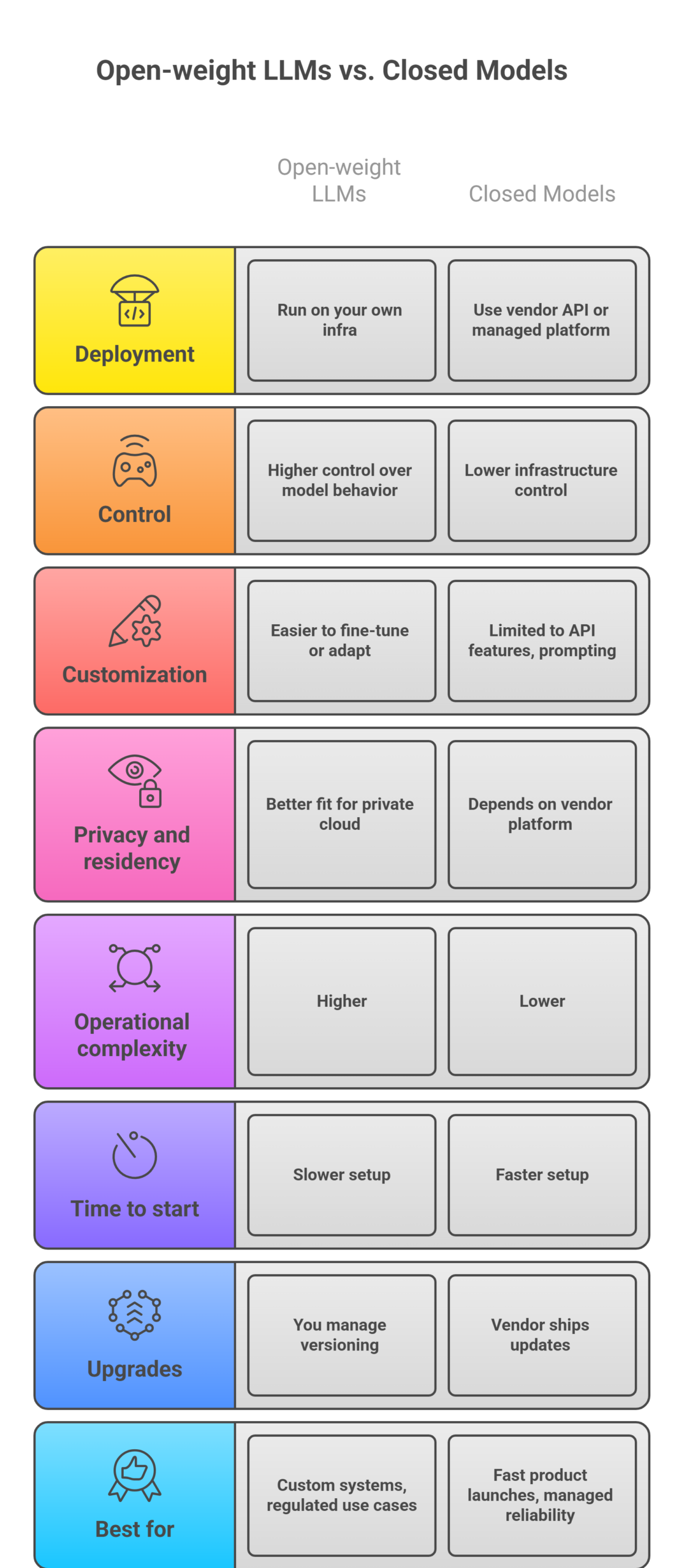

Open source LLMs vs closed models: comparison table

| Factor | Open-weight LLMs | Closed models |
| --- | --- | --- |
| Deployment | Run on your own infra or chosen host | Use vendor API or managed platform |
| Control | Higher control over model behavior and hosting | Lower infrastructure control |
| Customization | Often easier to fine-tune or adapt deeply | Usually limited to API features, prompting, or hosted fine-tuning |
| Privacy and residency | Better fit for private cloud or on-prem needs | Depends on vendor platform and controls |
| Operational complexity | Higher | Lower |
| Time to start | Slower setup | Faster setup |
| Upgrades | You manage versioning and migration | Vendor ships updates |
| Best for | Custom systems, regulated use cases, cost tuning at scale | Fast product launches, managed reliability, latest hosted features |

This is the core decision framework most teams should use.

Where open-weight models win

- Open-weight models win when control is the priority. OpenAI says gpt-oss can run on infrastructure you control, Google says Gemma models support tuning and deployment in your own projects, and Mistral emphasizes building and deploying AI “anywhere” with open models. That makes open-weight options attractive for companies with stricter privacy, latency, cost, or customization requirements.

- They also win when you want deep workflow ownership. If you need custom guardrails, specialized domain tuning, private deployment, or lower inference costs after setup, open-weight models can be a better long-term fit. Meta explicitly says Llama weights are downloadable and customizable, while Google and Mistral position their open models for adaptation and self-managed deployment.

Where closed models win

- Closed models usually win on convenience and managed capability. With OpenAI or Anthropic, you can start through an API, use the vendor’s hosted infrastructure, and avoid running large-scale inference yourself. Anthropic also documents direct API access and platform-managed features, while OpenAI’s model docs focus on using hosted models through the platform.

- They also tend to be easier for fast-moving product teams. If your goal is to ship quickly, experiment with minimal ops work, and use the newest hosted reasoning or multimodal capabilities, closed models are often the cleaner path. That is especially true for startups or internal teams that do not want to own GPU capacity, deployment pipelines, and model operations. This is an inference from how these vendors package access primarily through managed APIs and hosted tooling.

Real examples in 2026

On the open side, current examples include Llama, Gemma, Mistral open-weight models, and OpenAI’s gpt-oss. Meta highlights downloadable Llama weights, Google describes Gemma as open models with open weights and commercial use allowances, Mistral lists open-weight models under Apache 2.0, and OpenAI positions gpt-oss as open-weight reasoning models for self-hosting.

On the closed side, current examples include OpenAI hosted API models and Anthropic Claude models, both primarily accessed as managed services through vendor APIs and platforms.

Which one should you choose?

Choose an open-weight model when:

- you need on-prem or private-cloud deployment

- data residency and control matter

- you want deeper customization

- you are prepared to manage infra and evaluation

- long-run cost optimization matters more than setup speed

Choose a closed model when:

- you want the fastest path to production

- your team does not want to manage model hosting

- you value managed reliability and platform tooling

- you need easy access to frontier hosted capabilities

Those are the most practical decision signals for commercial buyers.
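The two checklists above can be sketched as a simple tally. This is a minimal illustration, not a scoring method from any vendor: the `TeamNeeds` fields, the `recommend` function, and the equal weighting of every signal are all assumptions made for the example. In practice a single hard requirement, such as on-prem deployment, may outweigh everything else.

```python
from dataclasses import dataclass


@dataclass
class TeamNeeds:
    """Hypothetical yes/no answers to the checklist items above."""
    # Signals that favor an open-weight model
    needs_private_deployment: bool = False
    data_residency_matters: bool = False
    wants_deep_customization: bool = False
    can_manage_infra_and_evals: bool = False
    prioritizes_long_run_cost: bool = False
    # Signals that favor a closed model
    wants_fastest_launch: bool = False
    avoids_model_hosting: bool = False
    values_managed_reliability: bool = False
    needs_frontier_hosted_features: bool = False


OPEN_SIGNALS = (
    "needs_private_deployment",
    "data_residency_matters",
    "wants_deep_customization",
    "can_manage_infra_and_evals",
    "prioritizes_long_run_cost",
)
CLOSED_SIGNALS = (
    "wants_fastest_launch",
    "avoids_model_hosting",
    "values_managed_reliability",
    "needs_frontier_hosted_features",
)


def recommend(needs: TeamNeeds) -> str:
    """Count the signals on each side and return the side with more.

    Assumption: every signal carries equal weight.
    """
    open_score = sum(getattr(needs, name) for name in OPEN_SIGNALS)
    closed_score = sum(getattr(needs, name) for name in CLOSED_SIGNALS)
    if open_score > closed_score:
        return "open-weight"
    if closed_score > open_score:
        return "closed"
    return "no clear signal"


# Example: a team with strict residency rules that also wants to ship quickly.
team = TeamNeeds(
    needs_private_deployment=True,
    data_residency_matters=True,
    wants_fastest_launch=True,
)
print(recommend(team))  # open-weight (two open signals vs one closed signal)
```

A tie returns "no clear signal" on purpose: when the checklists balance out, the decision usually comes down to which single requirement is non-negotiable.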

Common mistakes teams make

- The biggest mistake is treating this as an ideology question. It is usually an operations question. Some teams choose open models for “freedom” but underestimate deployment and monitoring work. Others default to closed APIs without checking whether private deployment, data controls, or long-term cost would matter more.

- Another mistake is assuming “open source” automatically means cheaper. Open-weight models may reduce licensing dependence, but infrastructure, tuning, serving, and maintenance still cost real money. Closed APIs may look expensive per token, but they remove a lot of engineering overhead. This is an inference from the hosting-versus-self-management trade-off documented by vendors.
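The cost point above can be made concrete with a rough break-even calculation. The sketch below is an illustration under stated assumptions: every price and volume is a made-up placeholder rather than a vendor quote, and self-hosting is modeled as a fixed monthly cost (one always-on GPU node plus engineering time) that does not grow with usage.

```python
def monthly_api_cost(tokens_per_month: float, price_per_million_tokens: float) -> float:
    """Cost of a hosted API billed purely per token."""
    return tokens_per_month / 1_000_000 * price_per_million_tokens


def monthly_self_hosted_cost(
    gpu_hours: float, gpu_hourly_rate: float, engineering_cost: float
) -> float:
    """Fixed monthly cost of self-hosting: GPU rental plus engineering time."""
    return gpu_hours * gpu_hourly_rate + engineering_cost


def break_even_tokens(fixed_monthly_cost: float, price_per_million_tokens: float) -> float:
    """Monthly token volume at which the API bill equals the fixed self-hosting cost."""
    return fixed_monthly_cost / price_per_million_tokens * 1_000_000


# Hypothetical placeholder numbers, not real prices.
API_PRICE_PER_MILLION = 5.0   # currency units per million tokens
GPU_HOURS = 720.0             # one node running all month
GPU_HOURLY_RATE = 2.0         # currency units per GPU hour
ENGINEERING_COST = 8_560.0    # monthly share of ops and tuning work

self_hosted = monthly_self_hosted_cost(GPU_HOURS, GPU_HOURLY_RATE, ENGINEERING_COST)
threshold = break_even_tokens(self_hosted, API_PRICE_PER_MILLION)

print(f"Self-hosted cost per month: {self_hosted:,.0f}")
print(f"Break-even volume: {threshold:,.0f} tokens per month")
```

Under these placeholder numbers, self-hosting costs 10,000 per month and only becomes cheaper than the API above 2 billion tokens per month. Below that volume the "expensive" per-token API is the cheaper option, which is why open-weight does not automatically mean lower cost.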

FAQ: Open Source LLMs vs Closed Models

- Are open source LLMs really open source?

Sometimes, but often they are more accurately described as open-weight models. The practical difference is that weights may be downloadable even when training data, licensing terms, or other components are not fully open.

- Are closed models better than open models?

Not always. Closed models are often easier to use and faster to deploy, while open-weight models are often better for control, customization, and self-hosting.

- Which is better for enterprises with privacy needs?

Open-weight models are often a stronger fit when enterprises need tighter infrastructure control or private deployment.

- Which is better for startups?

Closed models are often better when speed matters more than infrastructure ownership, though this depends on product type and team capability. That is an inference from the managed API model used by major vendors.

Final takeaway

Open source LLMs vs closed models is not really about which side is universally better. It is about what you need most. Open-weight models are better when control, customization, and private deployment matter. Closed models are better when speed, simplicity, and managed infrastructure matter. The right choice depends less on hype and more on your workflow, compliance needs, and engineering capacity.